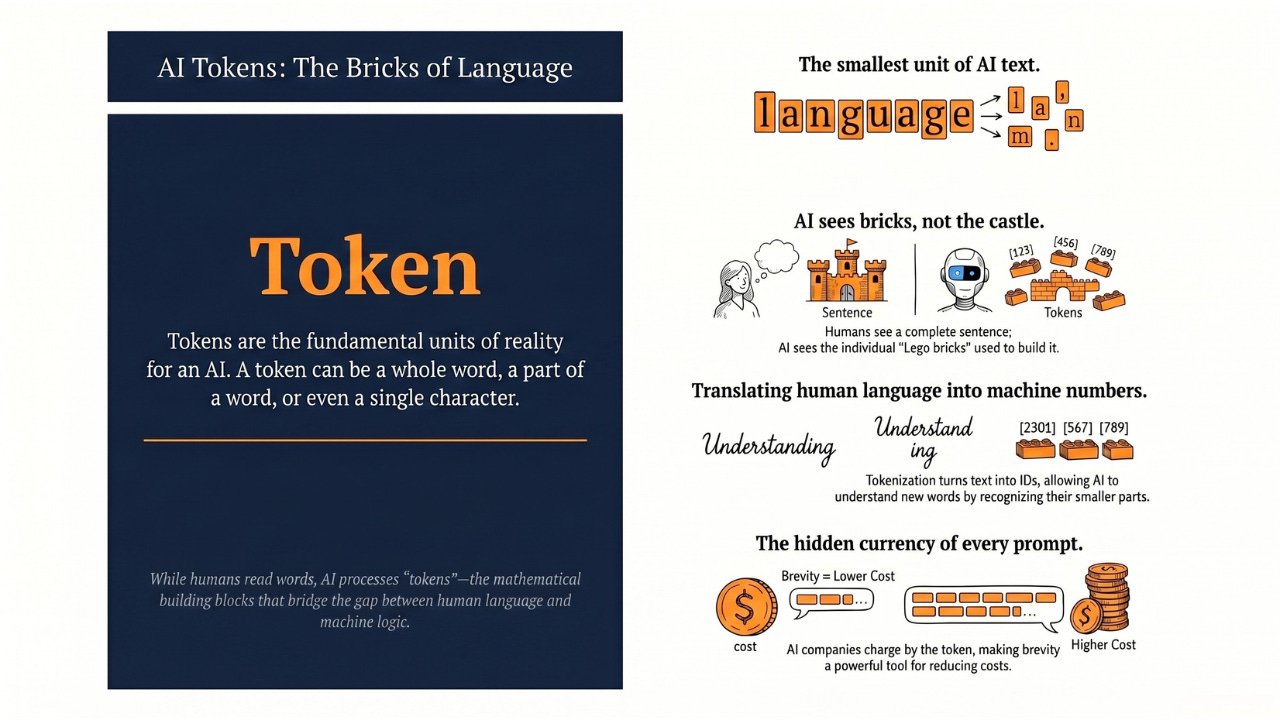

A Token is the smallest unit of text an AI processes — a whole word, part of a word, or even a single character. It is the bridge between human language and the numbers AI actually understands.

Hey Common Folks!

Over the last two articles, we covered what a prompt is and how to write a good one. That was about how to talk to AI. Now we look at the other side of that conversation: how AI actually reads what you typed.

If you have ever looked at the pricing page for ChatGPT or Claude, or seen an error message saying “Token limit exceeded,” you have probably scratched your head. Why don’t they just count words? Why this fancy term “Token”?

It turns out, computers don’t read the way we do. To an AI, a Token is the fundamental unit of reality.

What is a Token?

A token is a chunk of text. It can be a whole word (“apple”), part of a word (“smart” + “phones”), a piece of punctuation, or even a single character.

Think of tokens as the atoms of language. Just as a molecule of water is made up of atoms (Hydrogen and Oxygen), a sentence is made up of tokens.

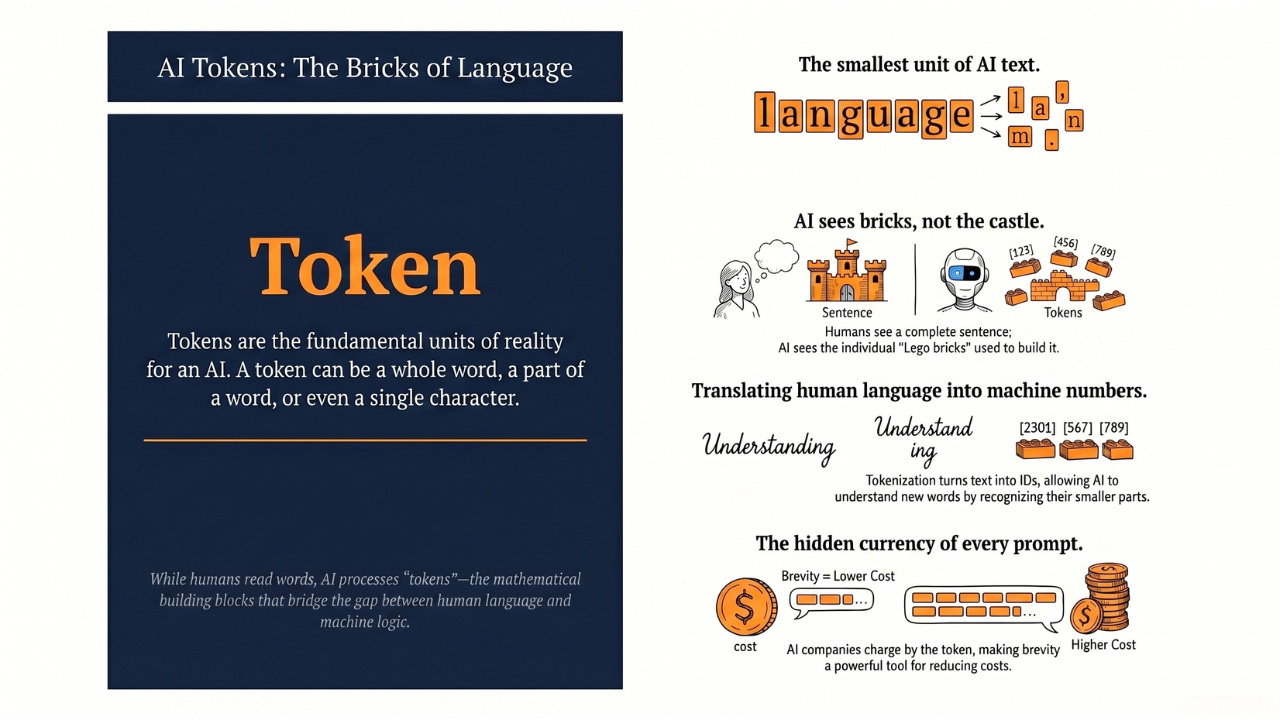

The Analogy: The Lego Castle

Imagine you have a beautiful Lego castle (a sentence).

- For Humans: We look at the castle and say, “That’s a castle.” We read the whole word.
- For AI: The AI looks at the individual plastic bricks used to build it.

Sometimes, a single brick is a whole window (a whole word like “apple”). Other times, to build a long wall (a complex word like “smartphones”), you need two bricks: “smart” and “phones.”

The AI doesn’t see the castle; it sees a pile of bricks. It processes those bricks one by one to understand the structure.

Why Not Just Use Words?

A fair question: “Why break ‘smartphones’ into two tokens? Why not just treat it as one word?”

Because computers don’t understand English. They understand numbers.

To teach an AI language, every piece of text first has to be converted into a list of numbers (called token IDs), which the AI later turns into rich mathematical representations called embeddings.

- If we assigned a unique number to every single word in the English language, the list would be effectively endless and unmanageable. Every name, every typo, every made-up word would need its own slot.
- By breaking complex words into smaller chunks (tokens), the AI can understand words it has never seen before by recognizing their parts.

If the AI knows “smart” and it knows “phones,” it can understand “smartphones” without needing a separate definition for it.
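To make that concrete, here is a minimal sketch of splitting a word into known pieces. It assumes a tiny made-up vocabulary (`TOY_VOCAB`) and a simple "grab the longest piece you recognize" rule. Real tokenizers learn their vocabulary from data with algorithms such as byte-pair encoding, so treat this as an illustration of the idea, not how any particular model does it.

```python
# Hypothetical toy vocabulary. Real tokenizers learn theirs from huge amounts of text.
TOY_VOCAB = {"smart", "phones", "phone", "apple", "s"}


def split_into_subwords(word, vocab):
    """Split a word into the longest known pieces, left to right.

    Falls back to a single character when no known piece starts
    at the current position.
    """
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest candidate first, shrinking until one is in the vocabulary.
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                break
        else:
            # Nothing matched: emit one character so we always make progress.
            end = start + 1
        pieces.append(word[start:end])
        start = end
    return pieces


print(split_into_subwords("smartphones", TOY_VOCAB))  # ['smart', 'phones']
print(split_into_subwords("apple", TOY_VOCAB))        # ['apple']
print(split_into_subwords("xyz", TOY_VOCAB))          # ['x', 'y', 'z']
```

Notice the last line: a word the vocabulary has never seen still gets split into something, which is why no typo or made-up word ever needs its own slot.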

How Does It Work? (The Tokenization Process)

Before your prompt hits the AI, a process called Tokenization happens.

- Input: You type “I love AI.”
- Chopping: The tokenizer chops this up. In a modern GPT-style tokenizer, it looks roughly like `["I", " love", " AI", "."]`: four tokens, including the leading spaces. (Real tokenizers are picky like that, and they bake the spacing into the tokens themselves.)
- Numbering: The system assigns a specific ID number to each chunk. Modern tokenizers have a vocabulary of tens of thousands to a few hundred thousand possible tokens, so real IDs can run well into five or six digits. The IDs here are only for illustration: `[40, 1842, 16124, 13]`.
- Processing: The AI receives that list of numbers and asks itself the one question it knows how to answer: what number should come next? It picks the most likely next number based on the patterns it learned during training (we walked through that pattern-prediction in How AI Actually Learns). That predicted number is converted back into a piece of text, and then the AI does it again, one token at a time, until your full answer is built.
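The four steps above can be sketched in a few lines of Python. Everything here is a stand-in: `TOKEN_TO_ID` is a hypothetical four-entry vocabulary reusing the illustrative IDs from the walkthrough, and `NEXT_ID` is a hard-coded lookup table playing the role of the model. A real model scores every token in its vocabulary using the whole conversation so far, so this only shows the shape of the loop.

```python
# Hypothetical toy vocabulary: token text -> token ID (illustrative IDs only).
TOKEN_TO_ID = {"I": 40, " love": 1842, " AI": 16124, ".": 13}
ID_TO_TOKEN = {token_id: token for token, token_id in TOKEN_TO_ID.items()}

# Stand-in for the model: "given the last ID, which ID most likely comes next?"
NEXT_ID = {40: 1842, 1842: 16124, 16124: 13}
END_ID = 13  # in this toy, the full stop ends the answer


def encode(text):
    """Chopping + numbering: turn text into a list of token IDs."""
    ids = []
    pos = 0
    while pos < len(text):
        # Pick the longest vocabulary entry that matches at this position.
        candidates = [tok for tok in TOKEN_TO_ID if text.startswith(tok, pos)]
        if not candidates:
            raise ValueError(f"no token matches the text at position {pos}")
        match = max(candidates, key=len)
        ids.append(TOKEN_TO_ID[match])
        pos += len(match)
    return ids


def decode(ids):
    """Turn token IDs back into text."""
    return "".join(ID_TO_TOKEN[token_id] for token_id in ids)


def generate(prompt_ids, max_new_tokens=10):
    """Processing: append one predicted ID at a time until the end token."""
    ids = list(prompt_ids)
    for _ in range(max_new_tokens):
        if ids[-1] == END_ID or ids[-1] not in NEXT_ID:
            break
        ids.append(NEXT_ID[ids[-1]])
    return ids


print(encode("I love AI."))           # [40, 1842, 16124, 13]
print(decode(generate(encode("I"))))  # I love AI.
```

The spaces live inside the tokens (`" love"`, `" AI"`), which is why `decode` can glue the pieces back together without adding anything in between.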

Want to see this live? Free tools like OpenAI’s tokenizer page let you paste any text and watch how it gets split, and Anthropic’s token-counting API reports how many tokens a message will use. It’s worth doing once. You will never look at a long prompt the same way again.

The “Currency” of AI

Why should you care about tokens? Because in the world of Generative AI,

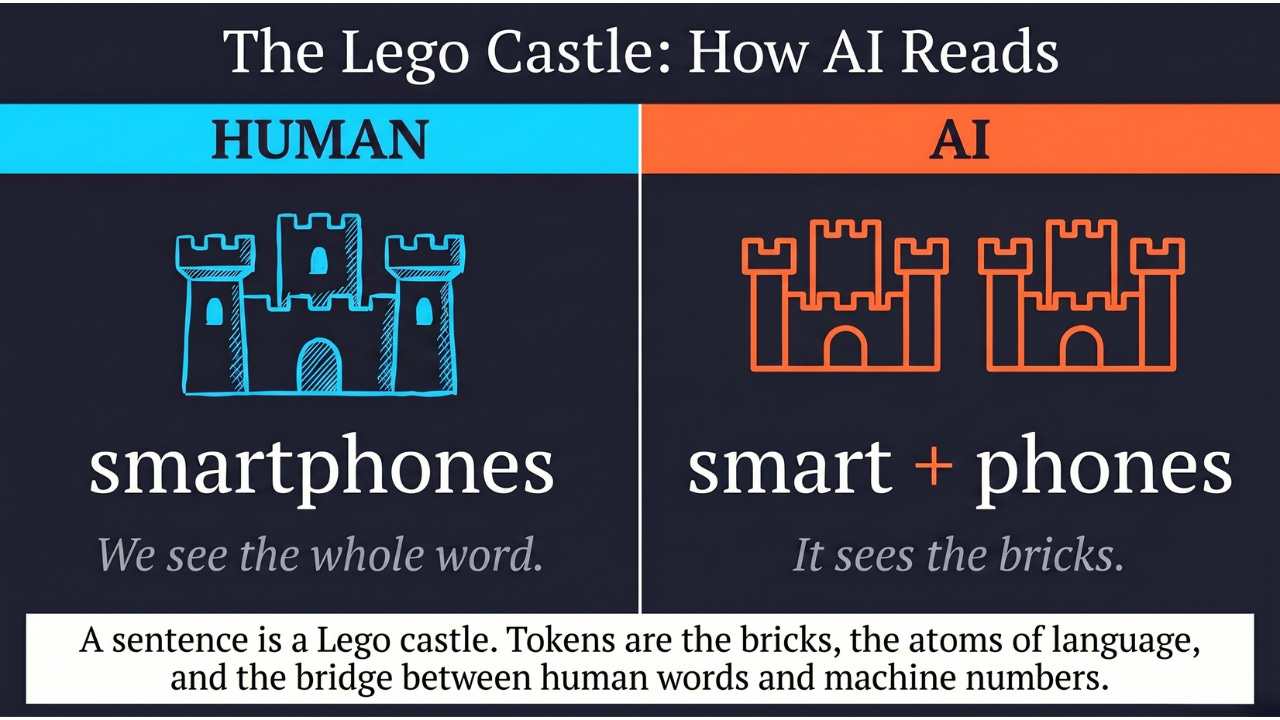

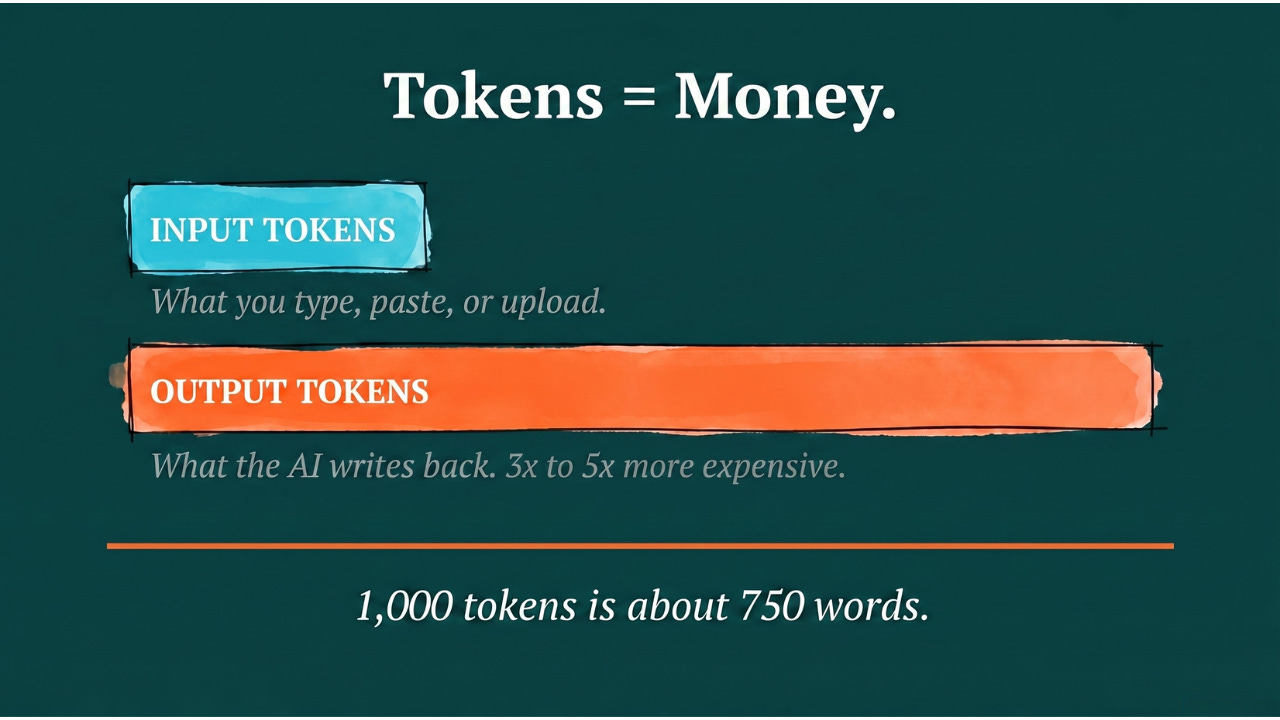

Tokens = Money.

When companies like OpenAI or Anthropic charge you, they don’t charge per question. They charge per token.

- Input Tokens: what you type, paste, or upload into the chat.
- Output Tokens: what the AI writes back.

A useful 2026 reality check: output tokens almost always cost more than input tokens, typically 3 to 5 times more, because the model can read your whole input in one pass but has to write its answer one token at a time. So a long, rambling AI response costs you more than a short, precise one. Brevity in your prompt and in the format you ask for is a real lever on cost.

Roughly speaking, 1,000 tokens is about 750 words of English. So if you ask the AI to summarize a 50-page document, you are “spending” tokens to feed that document into the model, and spending more tokens to get the summary back out.
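Here is that arithmetic as a back-of-the-envelope sketch. The prices are hypothetical placeholders (output set to 5 times input, at the top of the range mentioned above), and the 300 words per page figure is an assumption too, so check your provider's pricing page before trusting any number that comes out.

```python
# Rule of thumb from the text: 1,000 tokens is about 750 words.
WORDS_PER_1000_TOKENS = 750

# Hypothetical prices in dollars per million tokens. Not real pricing.
PRICE_PER_MILLION_INPUT = 3.00
PRICE_PER_MILLION_OUTPUT = 15.00


def estimate_tokens(word_count):
    """Convert a word count into an approximate token count."""
    return round(word_count * 1000 / WORDS_PER_1000_TOKENS)


def estimate_cost(input_words, output_words):
    """Return (input tokens, output tokens, estimated cost in dollars)."""
    input_tokens = estimate_tokens(input_words)
    output_tokens = estimate_tokens(output_words)
    cost = (
        input_tokens * PRICE_PER_MILLION_INPUT
        + output_tokens * PRICE_PER_MILLION_OUTPUT
    ) / 1_000_000
    return input_tokens, output_tokens, cost


# A 50-page document at an assumed 300 words per page, summarized in 300 words.
document_words = 50 * 300
summary_words = 300

tokens_in, tokens_out, dollars = estimate_cost(document_words, summary_words)
print(tokens_in, tokens_out)  # 20000 400
print(f"${dollars:.3f}")      # $0.066
```

With these made-up prices, the 400-token summary costs a tenth as much as the 20,000-token document even though it is fifty times shorter. That is the output premium at work.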

The good news for 2026: frontier models like Claude and Gemini now support context windows in the hundreds of thousands to over a million tokens. A 500-page novel can fit in a single prompt today — something that was impossible just two years ago. Tokens still cost money, but the ceiling on how much you can hand the AI in one shot has gone way up.
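You can sanity-check the novel claim with the same 750-words-per-1,000-tokens rule of thumb. This is a rough sketch: the 300 words per page, the 4,000 tokens reserved for the reply, and the window sizes are all assumptions chosen for illustration, not the specs of any particular model.

```python
def fits_in_context(pages, context_window_tokens,
                    words_per_page=300, reserved_for_output=4000):
    """Estimate prompt size and whether it fits, leaving room for the reply.

    Uses the rule of thumb that 1,000 tokens is about 750 words.
    Returns (estimated prompt tokens, True if it fits).
    """
    prompt_tokens = round(pages * words_per_page * 1000 / 750)
    fits = prompt_tokens + reserved_for_output <= context_window_tokens
    return prompt_tokens, fits


# A 500-page novel against two hypothetical context window sizes.
print(fits_in_context(500, 1_000_000))  # (200000, True)
print(fits_in_context(500, 128_000))    # (200000, False)
```

The reply shares the window with your prompt, which is why the check holds some tokens back instead of filling the window to the brim.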

The Takeaway

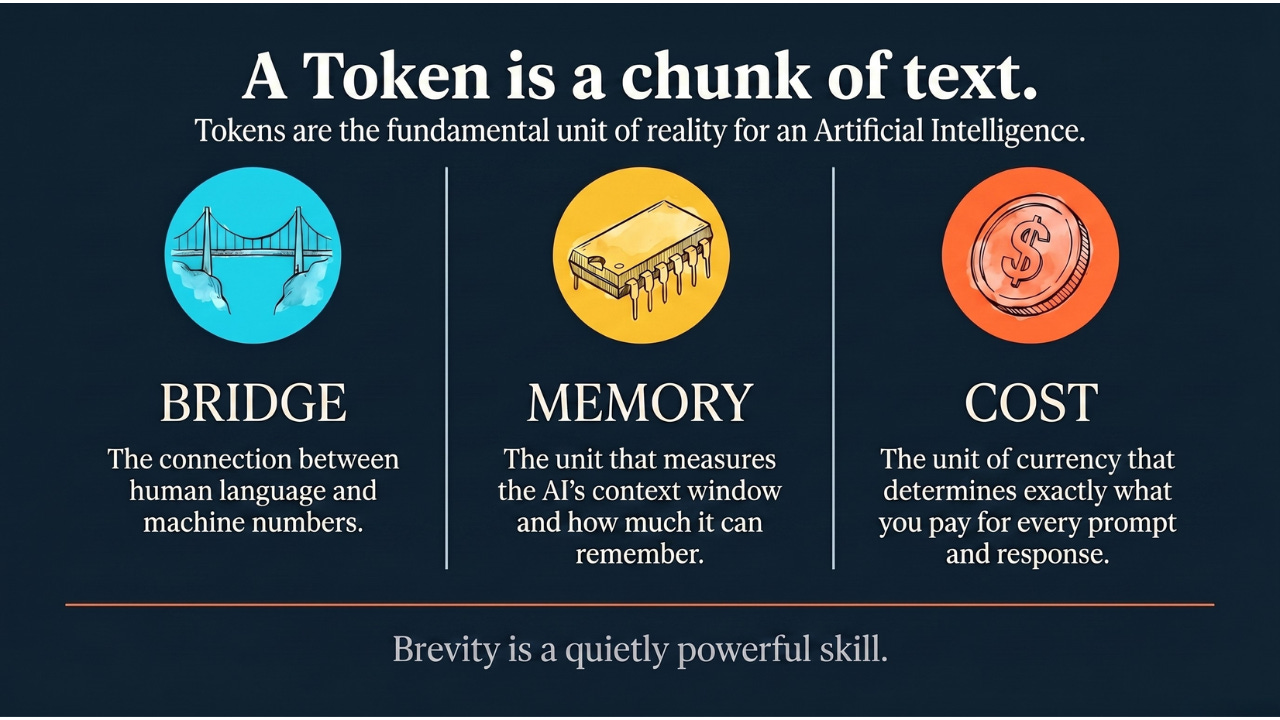

A Token is simply a chunk of text.

- It is the bridge between human language and machine numbers.
- It is the unit used to measure the size of the AI’s memory (the context window).
- It is the unit used to calculate the cost of using the AI.

Understanding tokens explains why your long prompt sometimes gets cut off, why running a massive analysis costs a few dollars instead of a few cents, and why brevity in your prompts is a quietly powerful skill.

Coming Up

You now know how AI breaks your prompt into tokens. But where did it learn what those tokens actually mean in the first place? Next, we’ll dig into how AI gets its basic education — the massive pre-training phase where a blank-slate model reads a huge slice of the internet and starts to make sense of the world.

AI for Common Folks – Making AI understandable, one concept at a time.
