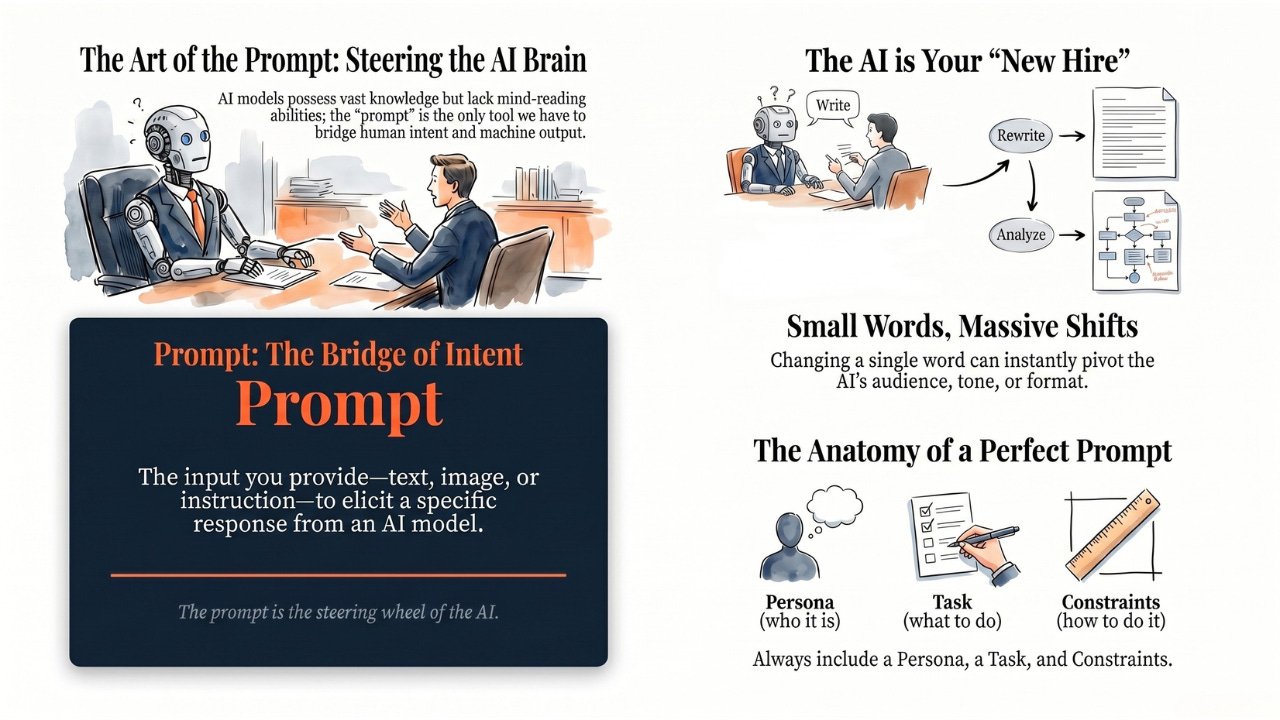

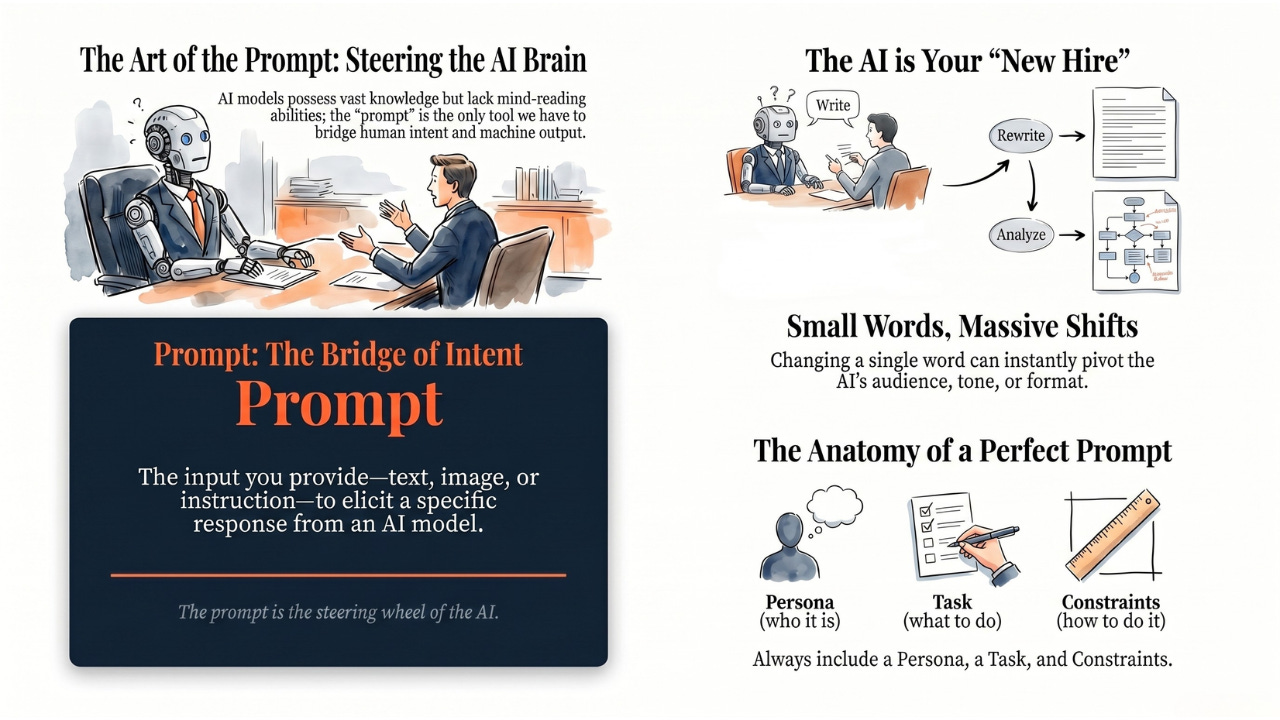

A prompt is the input you provide to an AI model, whether text, image, voice, or document, to get a specific response. It’s the bridge between human intent and machine output.

Hey Common Folks!

In our last article, we met the Large Language Model — the engine inside ChatGPT, Claude, and Gemini. Before that, we decoded GPT, and before that we introduced Foundation Models as the general-purpose AI brains powering today’s tools.

We know these AI models are incredibly powerful. But a Ferrari is useless if you don’t know how to drive it.

How do you actually talk to this super-brain? You don’t use Python code or binary zeros and ones. You use a Prompt.

And here’s the part most people miss: the same AI can give you a brilliant answer or a useless one based on nothing but how you asked. Same model. Same knowledge. Completely different output. That gap is what this article is about.

What is a Prompt?

In simple terms, a prompt is whatever you give the AI to get it to do something.

- When you type a question into ChatGPT, that text is the prompt.
- When you upload a screenshot of a spreadsheet and ask for key insights, that combination is the prompt.
- When you paste a 50-page PDF and ask for a summary, the document plus the instruction is the prompt.
- When you speak to AI through your phone, your voice is the prompt.

A prompt is the bridge between human intent and machine output. Everything you want, you have to express through it. The AI cannot read your mind. It can only work with what you give it.

The Analogy: Back to Our New Hire

Remember the new hire we met last article? The one who read the entire internet before day one?

They have all the knowledge in the world. But they don’t know you yet. They don’t know your style, your boss, your deadlines, your preferences. On day one, they need instructions for every single task, and the quality of your instructions determines the quality of their work.

- Bad Prompt: “Write an email.”
  - The new hire thinks: To whom? About what? Angry tone? Professional? Long? Short?
  - The result: A generic, useless draft.
- Good Prompt: “Write a polite email to my boss asking for two days of sick leave next week. Keep it under 50 words and don’t sound demanding.”
  - The new hire thinks: Got it. Topic, tone, recipient, length: all clear.
  - The result: A perfect, ready-to-send email.

The AI is that new hire. It has the capability to do almost anything, but it relies heavily on your instructions to know what to do, how to do it, and who it’s doing it for.

Why One Word Can Change Everything

You might have heard the term Prompt Engineering. It sounds fancy, but it just means “the art of asking correctly.”

A fair question to raise: in 2026, with ChatGPT, Claude, and Gemini so much more capable than they were a few years ago, does this skill still matter? The honest answer is yes, and the bar has moved. Early AI models needed near-magical phrasing to produce anything useful at all. Today’s models are far more forgiving — they’ll give you something even from a sloppy prompt. But the gap between something useful and exactly what you need still comes down to how you asked.

LLMs are sensitive to wording. Changing a single word in your prompt can completely change the answer you get back. Three quick examples:

One word changes the audience.

- “Explain gravity.” → a physics textbook definition.
- “Explain gravity to a 5-year-old.” → a story about falling apples.

One word changes the tone.

- “Rewrite this email.” → the AI picks a tone for you, maybe the wrong one.
- “Rewrite this email more politely.” → it keeps your meaning but softens the edges.

One word changes the format.

- “Summarize this article.” → a paragraph.
- “Summarize this article in bullet points.” → a scannable list.

Same AI. Same underlying knowledge. Completely different outputs. From one word of difference.

Why does this happen? Remember from the last article that the LLM predicts one word at a time (strictly speaking, one token, which is a word or a piece of a word), each time favoring a likely continuation of what came before. Your prompt is the “what came before.” When you change a word, you shift the probabilities for every word that follows. The AI isn’t being tricky. It’s responding exactly as designed to the pattern you gave it.
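To make that concrete, here is a toy sketch in Python. It is not how a real LLM works inside (real models use neural networks trained on enormous amounts of text). The tiny corpus and the `next_word_probs` helper are invented for this illustration. The helper simply counts which word follows a given prompt in the corpus, which is enough to show that adding one word to the prompt changes which next word is most likely.

```python
from collections import Counter

# Made-up mini "training data" (an assumption for illustration only).
CORPUS = [
    "explain gravity with equations",
    "explain gravity with equations",
    "explain gravity with diagrams",
    "explain gravity simply with apples",
    "explain gravity simply with apples",
    "explain gravity simply with stories",
]


def next_word_probs(prompt, corpus=CORPUS):
    """Estimate P(next word | prompt) by counting what follows the prompt."""
    prefix = prompt.lower().split()
    n = len(prefix)
    counts = Counter()
    for sentence in corpus:
        words = sentence.lower().split()
        # Slide over the sentence looking for the prompt, then record the word after it.
        for i in range(len(words) - n):
            if words[i:i + n] == prefix:
                counts[words[i + n]] += 1
    total = sum(counts.values())
    # Turn raw counts into probabilities (empty dict if the prompt never appears).
    return {word: count / total for word, count in counts.items()}


plain = next_word_probs("explain gravity with")
simple = next_word_probs("explain gravity simply with")

print(max(plain, key=plain.get))    # most likely next word: equations
print(max(simple, key=simple.get))  # most likely next word: apples
```

The only difference between the two prompts is the word “simply,” yet the most likely continuation flips from “equations” to “apples.” A real model does something far richer, but the dependence on what came before is the same idea.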

Same engine, same knowledge, completely different output. All from how you asked.

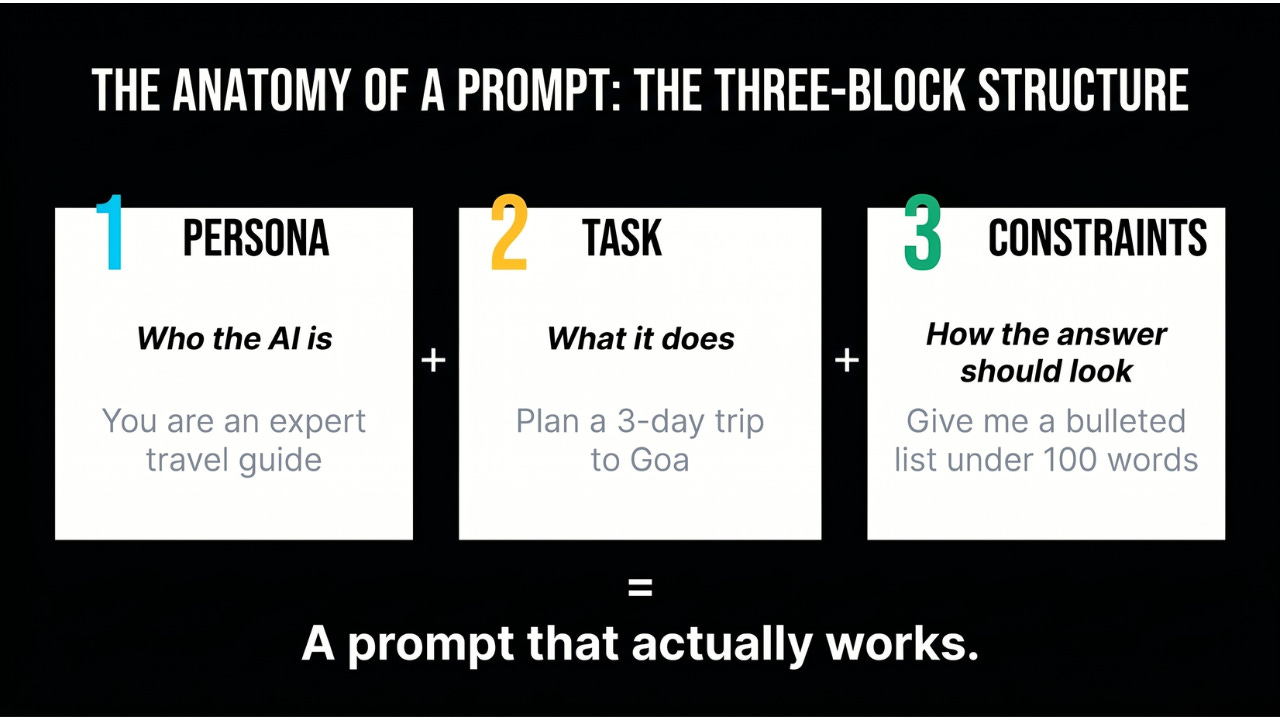

The Anatomy of a Prompt

Every strong prompt has three basic parts:

- The Persona: Tell the AI who it is.
  - Example: “You are an expert travel guide” or “You are a Python coding tutor.”
- The Task: Tell it what to do.
  - Example: “Plan a 3-day trip to Goa.”
- The Constraints and Format: Tell it how you want the answer.
  - Example: “Give me the answer as a bulleted list” or “Keep it under 100 words.”

Stack all three together and the new hire knows exactly who they are, what they’re doing, and how the answer should look.

Most prompts people type skip one or two of these parts. That’s why most AI answers feel generic.
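If you like to think in code, the three parts can be sketched as a small Python helper. This is only an illustration: the `Prompt` class and its `missing_parts` and `render` methods are hypothetical names invented here, not part of any AI tool or library. The sketch stacks persona, task, and constraints into one piece of text and flags whichever parts were skipped.

```python
from dataclasses import dataclass, field


@dataclass
class Prompt:
    """Hypothetical container for the three parts of a prompt."""
    persona: str = ""
    task: str = ""
    constraints: list[str] = field(default_factory=list)

    def missing_parts(self) -> list[str]:
        """Name the parts that were left empty."""
        missing = []
        if not self.persona.strip():
            missing.append("persona")
        if not self.task.strip():
            missing.append("task")
        if not self.constraints:
            missing.append("constraints")
        return missing

    def render(self) -> str:
        """Stack the non-empty parts into the text you would send to the AI."""
        parts = [self.persona, self.task, *self.constraints]
        return "\n".join(p.strip() for p in parts if p.strip())


vague = Prompt(task="Plan a trip.")
print(vague.missing_parts())  # ['persona', 'constraints']

full = Prompt(
    persona="You are an expert travel guide.",
    task="Plan a 3-day trip to Goa.",
    constraints=[
        "Give me the answer as a bulleted list.",
        "Keep it under 100 words.",
    ],
)
print(full.render())
```

You would never need a class like this to chat with an AI. The point is the checklist: if `missing_parts` would come back non-empty for the prompt in your head, the AI has to guess the rest.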

Bad Prompts vs Good Prompts: Three Real Examples

Here’s what that looks like in practice across three everyday tasks.

Writing

- Bad: “Write a blog post about productivity.”
- Good: “Write a 500-word blog post about productivity tips for remote workers. Use a conversational tone. Include three actionable tips. Start with a relatable scenario.”

Why the second works: The AI now knows the length, audience, tone, structure, and opening style. Those are five decisions it no longer has to guess.

Research

- Bad: “Tell me about climate change.”
- Good: “Summarize the top three causes of climate change in simple terms a high school student would understand. Use bullet points. Keep it under 200 words.”

Why the second works: You’ve turned an infinite-scope question into a focused, scannable answer at a specific reading level.

Code

- Bad: “Write Python code.”
- Good: “Write a Python function that takes a list of numbers and returns the average. Include comments explaining each step. Handle the case of an empty list.”

Why the second works: The AI now has a specification — inputs, outputs, edge cases, and documentation. It produces code you can actually use.
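For the curious, here is roughly what code meeting that specification could look like. This is a hand-written sketch, not actual output from any AI model. The prompt says only to “handle” an empty list, so returning `None` in that case is an assumption made here; raising an error would be another reasonable reading.

```python
def average(numbers):
    """Return the average of a list of numbers, or None if the list is empty."""
    # Edge case from the prompt: an empty list has no average.
    # Returning None is an assumed choice; the prompt did not say what to do.
    if not numbers:
        return None
    # Step 1: add up all the values.
    total = sum(numbers)
    # Step 2: divide the total by how many values there are.
    return total / len(numbers)


print(average([2, 4, 6]))  # 4.0
print(average([]))         # None
```

Notice how each sentence of the good prompt shows up in the code: the inputs and outputs, the comments, and the empty-list check. The one thing left open, what “handle” means, is exactly the kind of detail a follow-up prompt would pin down.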

In all three cases, the AI didn’t get smarter. The prompt did.

The Four Most Common Prompting Mistakes

Before we close, here are the four patterns that produce most of the frustrating AI experiences. Name them once, and you’ll start catching yourself doing them.

- Being too vague. “Help me with my project” tells the AI nothing. No topic, no format, no outcome.
- Not giving the AI the context it needs. “Fix this bug” without sharing the code, the error message, and what the code is supposed to do leaves the AI guessing. Being clear about what you want is not the same as giving the AI the raw material to do the job. Modern models are great, but they still can’t read your screen or your mind.
- Giving no constraints. Without a word limit, audience, or format, the AI defaults to verbose and generic.
- Expecting perfection on the first try for complex tasks. For simple asks, modern models often nail it immediately. But for anything nuanced (a layered analysis, a specific tone, a tricky coding problem), iteration is part of the skill, not a sign you’re doing it wrong.

The good news: each of these has a clean fix, and there are specific techniques that turn vague prompts into surgically precise ones. We’ll walk through all of them in the next article.

The Takeaway

A prompt is how we program AI models using natural language instead of code.

- It is the steering wheel of the AI.
- It is the instructions you hand the new hire.
- It is the difference between a useless generic reply and a precise, personalized answer.

The better you get at prompting, the smarter the AI seems to become. Not because the model changes, but because your instructions unlock more of what was always there.

Coming Up

Now you know what a prompt is and why it matters. But knowing what a steering wheel is doesn’t make you a driver. How do you actually get good at this? What separates the people who get brilliant AI answers from the ones who give up after a vague first try? Next, we’ll unpack the five techniques that turn any prompt into a great one — from role-playing to step-by-step thinking. That’s how you graduate from knowing about AI to actually getting what you want from it.

AI for Common Folks – Making AI understandable, one concept at a time.
