Good morning. The godfather of deep learning just raised a billion dollars to build AI that learns from reality instead of text, Microsoft is plugging Anthropic directly into its office software, and Anthropic is now officially suing the Pentagon. Here’s what happened 👇

1. AI Godfather Yann LeCun Raises $1 Billion to Build a New Kind of AI

Yann LeCun, the Turing Award-winning scientist who helped invent deep learning, just raised $1.03 billion for his new startup, AMI Labs (Advanced Machine Intelligence). The Paris-based company is valued at $3.5 billion before even shipping a product.

What’s he building? Something called “world models” — AI systems trained on how the physical world actually works, not just on text and images like today’s chatbots. LeCun has been saying for years that large language models (the technology behind ChatGPT and Claude) can’t truly reason or understand reality. Now he’s putting a billion dollars behind the alternative.

The investor list reads like an AI who’s who: Bezos Expeditions, NVIDIA, Samsung, Toyota Ventures, and Publicis Groupe all participated, alongside VCs Cathay Innovation, Greycroft, and Hiro Capital. LeCun, who founded Meta’s legendary FAIR research lab, left the company at the end of 2025. AMI’s CEO, Alexandre LeBrun, warned that “world models” is about to become the next buzzword: “In six months, every company will call itself a world model to raise funding.”

The first application area? Healthcare, a field where hallucinations from today’s AI models could have life-threatening consequences. AMI’s first disclosed partner is digital health startup Nabla. But long-term, LeCun sees this technology powering everything from autonomous robots to smart glasses. He’s already talking to Meta about integrating world models into Ray-Ban smart glasses.

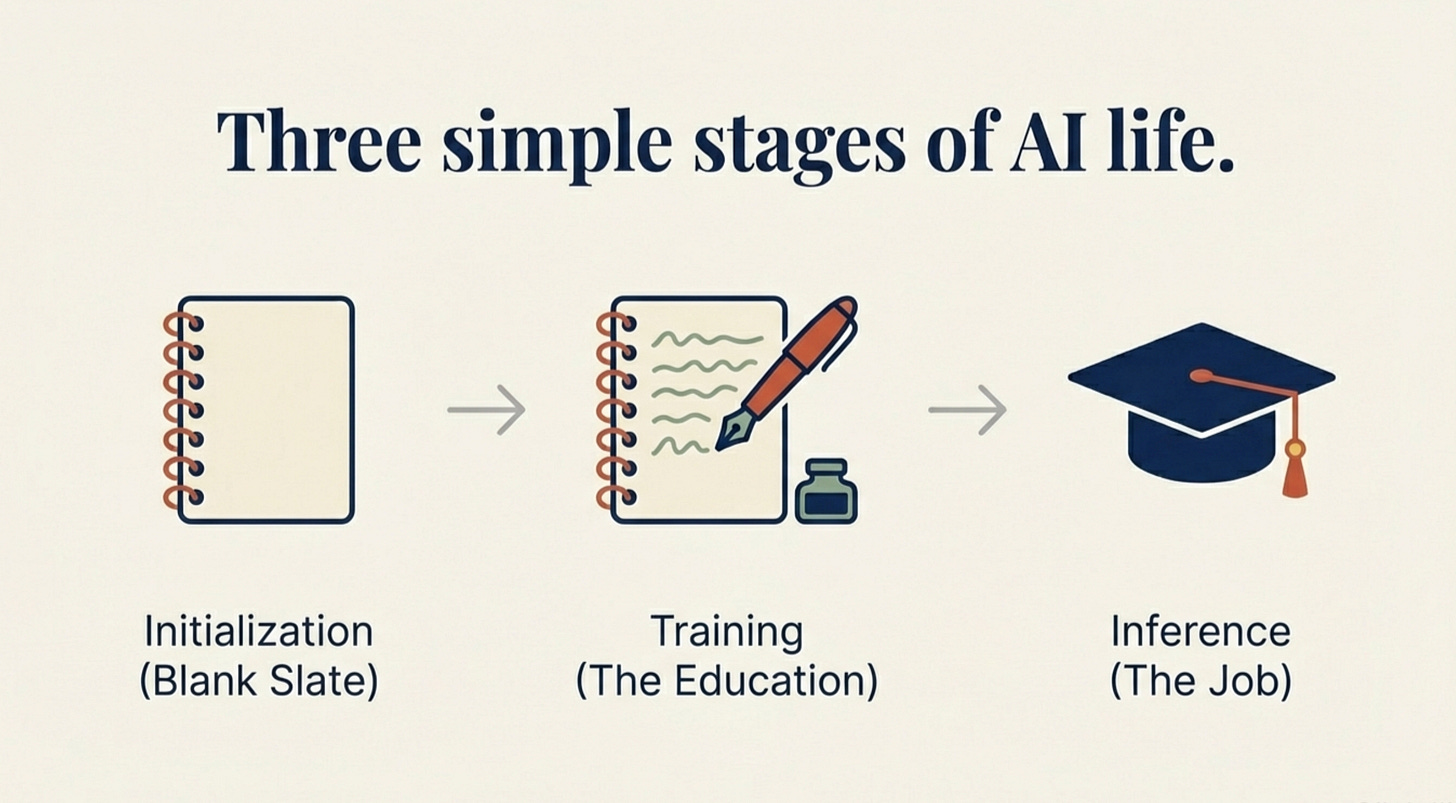

We broke down what AI models actually are in our AI Explained series — it’s the foundation you need to understand why this matters → What is a Model

Why it matters: This is the clearest sign yet that some of AI’s brightest minds think today’s chatbot approach has a ceiling. If LeCun is right, the AI that eventually understands your physical world — your home, your car, your body — won’t be built on language models at all.

Sources: TechCrunch | Reuters | The Verge

2. Microsoft Is Plugging Anthropic’s Claude Directly Into Office Software

Microsoft just announced Copilot Cowork — a new tool built on Anthropic’s Claude technology that lets AI agents handle complex, multi-step tasks inside Microsoft’s office suite. Think: building spreadsheets, creating apps, and organizing large volumes of data with limited human oversight. It’s arriving later this month for early-access users.

This is a big deal for two reasons. First, Microsoft is now offering Claude alongside OpenAI’s GPT models inside its $30-per-month Copilot service — breaking what was essentially a GPT-only arrangement. Second, the way Microsoft is positioning Cowork is specifically about security: unlike Anthropic’s own Claude Cowork (which runs locally on your device), Microsoft’s version runs in the cloud with enterprise-grade data controls.

“We work only in a cloud environment and we work only on behalf of the user. So you know exactly what information it has access to,” said Jared Spataro, who leads Microsoft’s AI-at-Work efforts. His pointed message: Claude Cowork on your laptop makes companies “very uncomfortable.” Microsoft’s version is the opposite.

Why it matters: The AI agent wars are moving from demos to the tools you use at work every day. If your company uses Microsoft 365, Anthropic’s technology is about to be one click away — and Microsoft just signaled that its future isn’t tied to OpenAI alone.

3. Anthropic Sues the Pentagon — And Employees From OpenAI and Google Are Backing Them

Anthropic filed a federal lawsuit on Monday to block the Pentagon from placing it on a national security blacklist, escalating a standoff that has consumed the AI industry for the past two weeks. The company is challenging what it calls an unconstitutional retaliation for refusing to remove safety limits on Claude for military use.

But the most surprising development: employees from rival AI companies — including OpenAI and Google — filed an amicus brief supporting Anthropic’s position. These are people who work for Anthropic’s direct competitors, publicly siding with the company against the US Department of Defense. Anthropic executives warned that the blacklisting could cost the company “billions in sales” and cause lasting reputational harm.

Meanwhile, the Pentagon drama continues to backfire commercially. Claude is still breaking daily download records and topping app store charts globally. The designation that was supposed to sideline Anthropic has turned into the best brand story in tech.

Why it matters: When employees at OpenAI and Google voluntarily stand up for their competitor, it signals something bigger than one company’s fight. The AI industry is drawing a line: governments shouldn’t be able to punish companies for having safety guardrails.

Sources: TechCrunch | Reuters | The Verge

4. Zoom Launches an AI Office Suite — and AI Avatars Are Coming to Your Meetings This Month

Zoom isn’t just for video calls anymore. The company announced a full AI-powered office suite today, along with AI avatars that can represent you in meetings starting this month. Zoom is also introducing real-time deepfake detection technology for meetings — a feature that acknowledges the obvious risk of putting AI-generated faces in business calls.

The avatars come in both realistic and stylized versions, letting users send an AI version of themselves to meetings they can’t attend in person. The office suite adds document creation, spreadsheet tools, and presentation building — all powered by AI — positioning Zoom as a direct competitor to Microsoft and Google’s workspace products.

Why it matters: The “AI in every meeting” era just got very real, very fast. When your colleague’s face on a Zoom call might be AI-generated, the line between “attending” and “not attending” a meeting gets blurry in ways we haven’t had to think about before.

Sources: TechCrunch

Quick Hits

- Google expands Gemini across Workspace: New AI capabilities are rolling out to Docs, Sheets, Slides, and Drive, making the apps “more personal and capable.” (TechCrunch)
- France bets on nuclear power for AI: President Macron announced plans to use France’s nuclear energy infrastructure to power AI data centres, positioning the country as Europe’s AI energy hub. (Reuters)
- Nscale hits $14.6 billion valuation: The Nvidia-backed UK AI infrastructure startup raised $2 billion in its latest round, with former Meta executives Sheryl Sandberg and Nick Clegg joining the board. (Reuters)
- Meta’s deepfake moderation isn’t good enough: The Meta Oversight Board is calling on the company to scale AI content labeling, including adopting the C2PA standard for detecting AI-generated content. (The Verge)

That’s it for today. The theme is impossible to miss: the AI industry is splitting into factions — companies building new foundations (LeCun), companies integrating everything (Microsoft), and companies fighting for the right to have principles (Anthropic). The question isn’t whether AI will transform your work. It’s who gets to decide the rules.

Forward this to someone who needs to stay in the loop.