A Foundation Model is a massive AI model trained on a vast amount of data (text, images, code) that can be adapted to perform a wide range of tasks, from writing emails to helping doctors with diagnoses.

Hey Common Folks!

In our last article, we explored Generative AI — AI that can create brand new content like essays, images, music, and code. We saw it transforming customer support, education, content creation, and software development.

But here’s a question that article left open: what’s actually powering all of that?

When ChatGPT writes your email, when Claude explains your kid’s homework, when Gemini summarizes a research paper — they’re all running on the same type of technology underneath. That technology is called a Foundation Model.

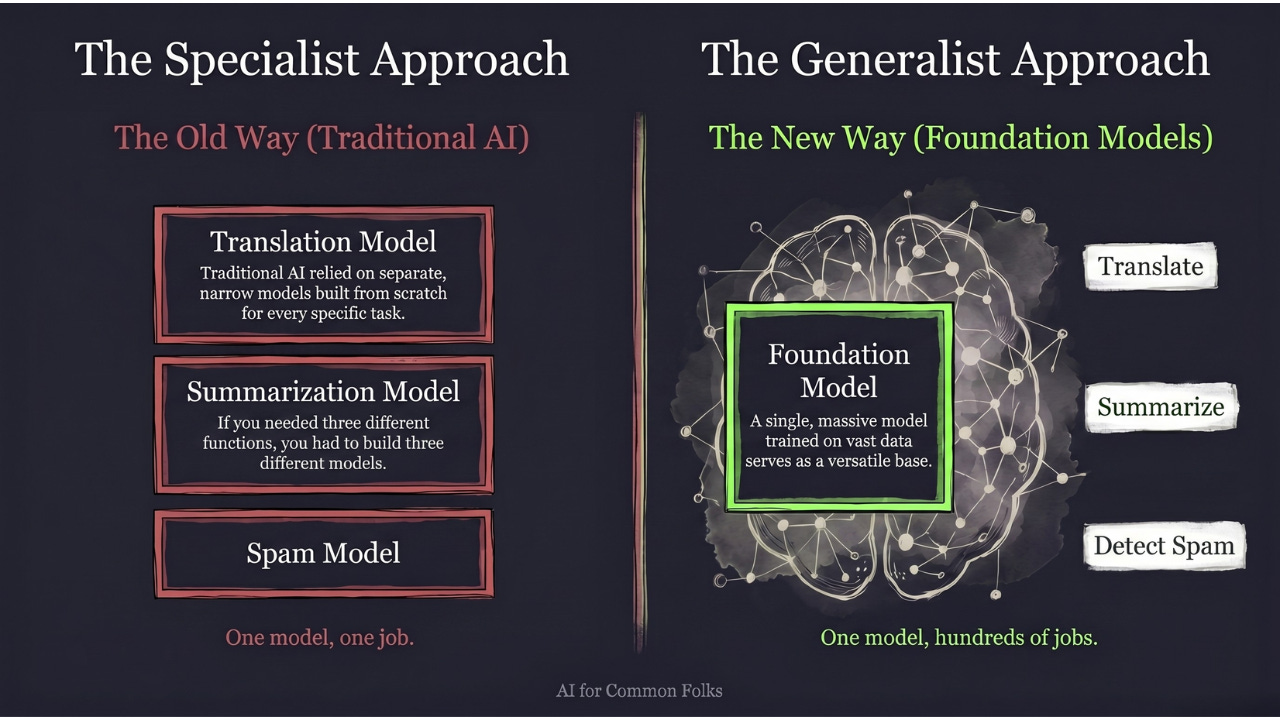

The Old Way vs. The New Way

In traditional AI (the old way), if you wanted to translate English to French, you built a “Translation Model.” If you wanted to summarize text, you built a “Summarization Model.” If you wanted to detect spam, you built a “Spam Model.”

One model, one job. Every new task meant starting from scratch.

Foundation Models changed the game completely. They are like a Swiss Army Knife — one single tool that can perform hundreds of different tasks, from writing code to composing poetry to analyzing legal contracts.
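To make the contrast concrete, here is a minimal Python sketch. Everything in it is a toy stand-in invented for illustration: the "specialist" functions are simple lookups rather than trained models, and `foundation_model` is a hypothetical placeholder for a call to a real model. The point is only the shape of the two approaches.

```python
# Old way: one narrow "model" per task (toy stand-ins, not real trained models).
def translate_en_to_fr(text: str) -> str:
    lookup = {"hello": "bonjour", "thank you": "merci"}
    return lookup.get(text.lower(), "<unknown phrase>")


def is_spam(text: str) -> bool:
    return "free money" in text.lower()


SPECIALISTS = {"translate": translate_en_to_fr, "spam": is_spam}


def run_specialist(task: str, text: str):
    # A task nobody built a model for simply cannot be handled.
    if task not in SPECIALISTS:
        raise NotImplementedError(f"No model was built for task: {task!r}")
    return SPECIALISTS[task](text)


# New way: one general model, and the task is described in plain language.
def foundation_model(prompt: str) -> str:
    """Hypothetical placeholder for a call to a real foundation model."""
    return f"[model response to: {prompt}]"


def run_generalist(instruction: str, text: str) -> str:
    # The instruction and the input travel together in a single prompt.
    return foundation_model(f"{instruction}\n\n{text}")


print(run_specialist("translate", "Hello"))
print(run_generalist("Summarize this:", "some text"))
```

In the old way, adding a new task means writing (and training) a new entry in `SPECIALISTS`. In the new way, a new task is just a different instruction.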

The Analogy: The “Super-Student” in the Library

Remember our earlier article, “What is a Model”? There, we compared an AI model to a student who has finished studying and walks into the exam with the knowledge in their head.

Now imagine two types of students:

- Traditional AI (The Specialist): This student only studied one book: “How to Repair a Bicycle.” Ask them to fix a bike? Perfect. Ask them to write an essay on history? They have no idea. They are specialized.

- Foundation Model (The Generalist): This student has read almost every book in the library. History, math, coding, poetry, mechanics, medicine. Because they’ve seen so much, they’ve learned general patterns about how the world works.

Now, you can ask this “Super-Student” to fix a bike, or write a poem, or solve a math problem, or draft a legal brief. They have a broad “foundation” of knowledge that allows them to adapt to almost any request.

That’s why they’re called Foundation Models — they serve as the base upon which everything else is built. Just like you lay a concrete foundation before building a house, a hospital, or a skyscraper.

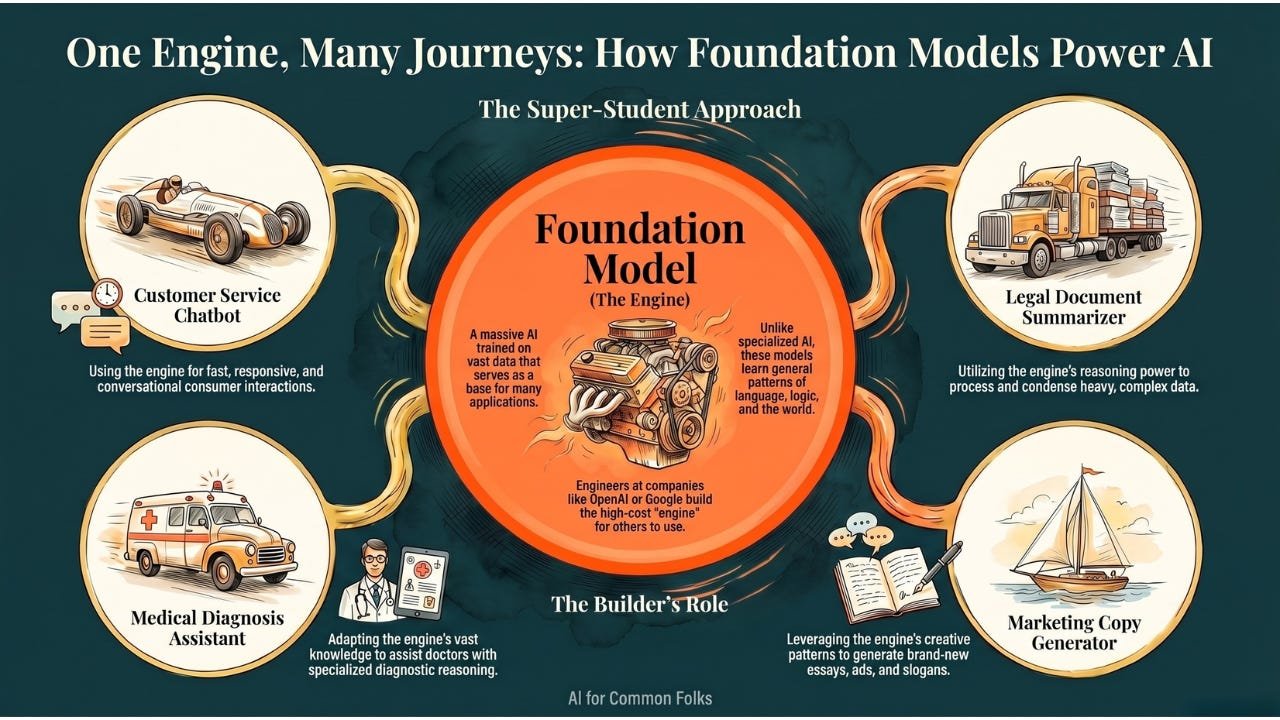

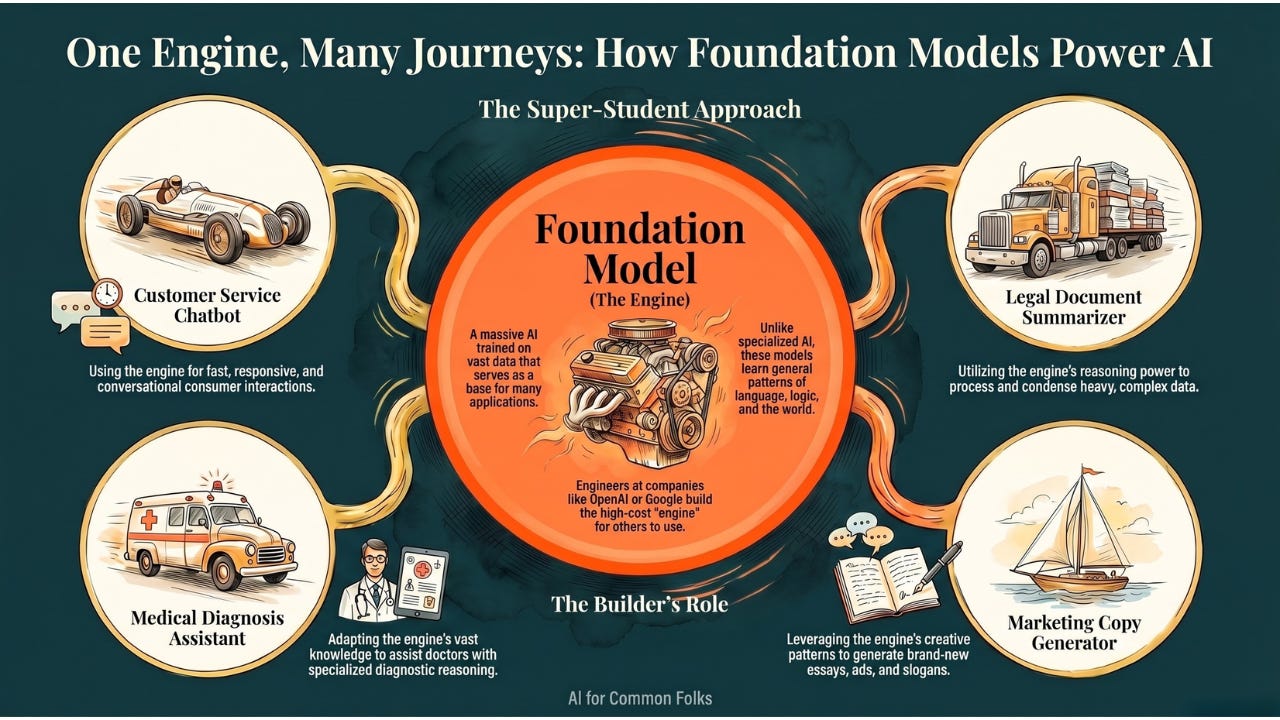

The Framework: Builders vs. Users

Understanding Foundation Models becomes much easier when you split the world into two groups: the people who make the engine, and the people who drive the car.

1. The Builder Perspective (Making the Brain)

These are the engineers at companies like OpenAI, Anthropic, Google, or Meta. They take massive amounts of raw materials — text from the internet, books, code repositories, images — and use sophisticated processes to train these models.

- The Goal: To create a model that learns general patterns about language, logic, and the world. It’s like feeding the “student” all those books.

- The Result: A Foundation Model (like GPT-4o, Claude, Gemini, or Llama).

2. The User Perspective (Putting the Brain to Work)

This is where most businesses and developers sit. They don’t need to know how to build the brain from scratch. They just need to know how to use it.

Think of the Foundation Model as a powerful Engine:

- One user might take that engine and put it into a Race Car (a chatbot for customer service).

- Another user might put it into a Truck (a tool to summarize legal documents).

- Another might put it into a Boat (an app that generates marketing copy).

- Another might put it into an Ambulance (an AI assistant helping doctors with diagnoses).

You don’t need to know how to build the engine. You just use its capabilities to solve your specific problem.

This is exactly what’s happening in the real world right now. Companies like Canva, Notion, Duolingo, and Khan Academy aren’t building their own Foundation Models — they’re plugging into existing ones (GPT, Claude, Gemini) and building products on top.
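Here is a rough Python sketch of the "one engine, many vehicles" idea. The names `stub_engine` and `make_product` are made up for this example, and the engine is a fake that just echoes its prompt. In a real product, that one function would call a hosted Foundation Model instead.

```python
from typing import Callable

# An "engine" is anything that takes a prompt and returns text.
Engine = Callable[[str], str]


def stub_engine(prompt: str) -> str:
    """Hypothetical stand-in for a real foundation model call."""
    return f"[v1 reply to: {prompt}]"


def make_product(engine: Engine, instructions: str) -> Callable[[str], str]:
    """Wrap the shared engine with instructions for one specific job."""
    def product(user_input: str) -> str:
        return engine(f"{instructions}\n\nInput: {user_input}")
    return product


# Three different "vehicles" built around the same engine.
support_bot = make_product(stub_engine, "You are a polite customer service agent.")
legal_summarizer = make_product(stub_engine, "Summarize this legal document in plain English.")
copywriter = make_product(stub_engine, "Write short marketing copy for this product.")

print(support_bot("Where is my order?"))
print(legal_summarizer("The party of the first part..."))
print(copywriter("A reusable water bottle"))
```

Notice that none of the three products contains any "intelligence" of its own. Each one is just the shared engine plus a description of the job, which is also why swapping in a better engine would upgrade all three at once.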

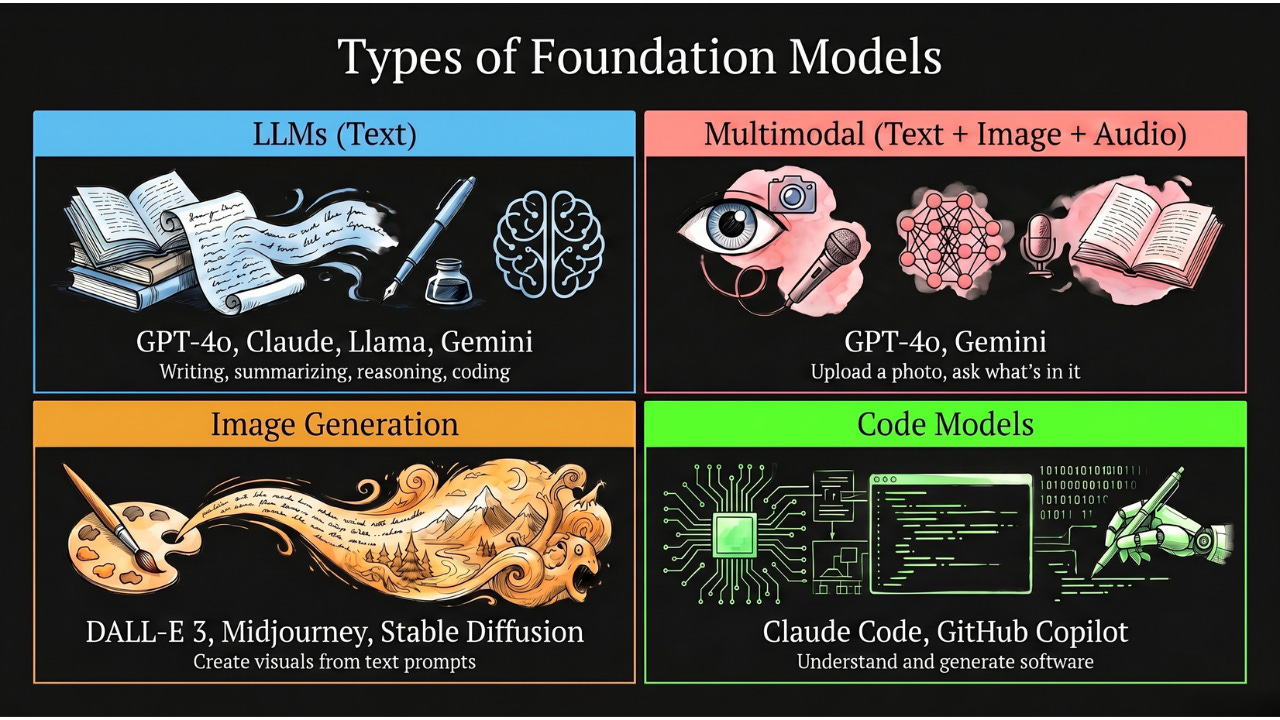

Types of Foundation Models

While Large Language Models (LLMs) are the most famous type, Foundation Models are expanding into new territories:

- Large Language Models (LLMs): Trained mainly on text. Good for writing, summarizing, reasoning, and coding. Examples: GPT-4o, Claude, Llama, Gemini.

- Large Multimodal Models (LMMs): These can understand not just text, but also images, audio, and video. When you upload a photo to ChatGPT and ask “what’s in this image?”, that’s a multimodal model at work. Examples: GPT-4o (handles text + images + audio), Gemini (text + images + audio + video).

- Image Generation Models: Trained on images paired with descriptions. They create visuals from text prompts. Examples: DALL-E 3, Midjourney, Stable Diffusion.

- Code Models: Models specialized or tuned for understanding and generating software code. They power coding tools such as Claude Code (built on Anthropic’s Claude models) and GitHub Copilot (which launched on OpenAI models).

All of these are Foundation Models — massive, general-purpose brains that can be adapted to specific jobs.

Why This Matters for You

You might be thinking: “Okay, but I’m not building AI. Why should I care about Foundation Models?”

Three reasons:

1. It explains why AI suddenly got good at everything. Before Foundation Models, AI was narrow — good at one thing, useless at the rest. Foundation Models are why your AI assistant can write an email and explain physics and debug code and plan your vacation. One brain, many skills.

2. It’s why the AI race is so expensive. Training a leading Foundation Model is estimated to cost tens of millions to hundreds of millions of dollars. That’s why only a handful of well-funded organizations (such as OpenAI, Anthropic, Google, and Meta) can afford to build them. Everyone else builds on top of them.

3. It’s the reason AI will keep getting more useful. As Foundation Models get better, products built on top of them can get better with very little extra work. When a new version of GPT comes out, an app using GPT can usually switch to it and improve right away. That’s the power of a shared foundation.

The Takeaway

A Foundation Model is a general-purpose AI brain:

- It is the engine inside the car.

- It is the student who read every book in the library.

- It is the Swiss Army Knife of the digital world.

It shifted AI from “specialized tools that do one thing” to “general-purpose models that can be adapted to almost anything.”

And here’s the exciting part: we’re still early. The Foundation Models of 2026 are dramatically more capable than those of 2023, and the ones coming in 2027 and beyond will likely make today’s look primitive. Understanding this technology now means you won’t be caught off guard as it transforms more of the world around you.

Coming Up

Now that you understand what a Foundation Model is — this massive, general-purpose brain — you’ve probably noticed we keep mentioning one name more than any other: GPT. GPT-3, GPT-4, ChatGPT. But what do those three letters actually stand for? And why did this particular approach become the dominant one? In our next article, we’ll decode the most famous acronym in AI and break down exactly how GPT works, letter by letter.

AI for Common Folks — Making AI understandable, one concept at a time.
