Semi-Supervised Learning is training an AI using a small amount of labeled data (with answers) and a large amount of unlabeled data (without answers)—like a teacher who only has time to grade 5 papers out of 100, but the students still figure out the rest.

Hey Common Folks!

We’ve covered the two main ways computers learn:

-

Supervised Learning: The teacher stands over the student, correcting every mistake (high effort, requires answer keys).

-

Unsupervised Learning: The student is left alone with a pile of books and told to “figure it out” (low effort, but harder to guide).

But what if there’s a middle ground? What if you’re a busy teacher who only has time to grade a few papers out of a hundred? Can the computer still figure out the rest?

Yes. This is called Semi-Supervised Learning, and it’s the secret weapon of big tech companies.

The Problem: Labels Are Expensive

Why don’t we just use Supervised Learning all the time? Because giving the computer the “answer key” is expensive and exhausting.

Imagine you want to build an AI that detects rare diseases in X-rays.

The Data: You can easily download 100,000 X-ray images from a hospital database. That’s the easy part.

The Labels: To tell the computer which X-ray shows the disease, you need a highly paid doctor to look at every single image and mark it. You can’t afford to pay a doctor to label 100,000 images.

This is where Semi-Supervised Learning saves the day. You pay the doctor to label just 1,000 images, and the AI uses that knowledge to figure out the remaining 99,000.

Real-World Proof: Modern deep learning has shown you can train state-of-the-art models with surprisingly small labeled datasets—sometimes as few as 150 images per category. Semi-supervised learning pushes that efficiency even further.

The Google Photos Example

The best example—and one you probably use—is Google Photos.

Step 1 – Unsupervised Grouping: You upload 5,000 photos of your family. Google’s AI looks at them and notices, “Hey, this face appears in 500 photos. That face appears in 200 photos.” It doesn’t know who they are, but it knows they’re the same person. It groups them together using clustering.

Step 2 – The Supervised Nudge: You click on one photo and type “Dad.”

Step 3 – Semi-Supervised Magic: The AI takes that one label (”Dad”) and instantly applies it to the other 499 photos in that group.

You did 1% of the work (labeling one photo), and the AI did 99% of the work (labeling the rest). That’s Semi-Supervised Learning in action.

How Does It Work?

It follows a simple logic:

-

Cluster First: The AI looks at all the data (labeled and unlabeled) and groups similar things together. It notices that Data Point A is very similar to Data Point B.

-

Propagate the Label: If you tell the AI that “Point A is a Cat,” the AI assumes that since Point B looks exactly like Point A, then Point B must be a Cat too.

The key assumption: data points that are close to each other probably share the same label.

2026 Update: Self-Supervised Learning Takes Over

Here’s where things get exciting. Since around 2018, a cousin of semi-supervised learning has become the backbone of modern AI: Self-Supervised Learning.

What’s the difference?

-

Semi-Supervised: You give the AI a few labels, and it uses unlabeled data to fill in the gaps.

-

Self-Supervised: The AI creates its own labels from the data itself—no human labels needed at all.

Real-World Example: How ChatGPT Learned to Write

ChatGPT wasn’t trained by humans writing “correct answers” for billions of sentences. Instead, it used self-supervised learning:

-

Take a sentence: “The cat sat on the ___”

-

Hide the last word (”mat”)

-

Train the AI to predict it

-

Repeat this billions of times with internet text

The AI creates its own “quiz” from raw text, learning language patterns without anyone labeling a single sentence. This is why GPT-4, Claude, and Gemini could train on trillions of words without hiring millions of human teachers.

Why this matters: Self-supervised learning is the reason AI exploded in the 2020s. It unlocked the internet’s massive, messy, unlabeled data.

Spot It in the Wild: Where You’re Already Using It

You interact with semi-supervised and self-supervised learning every day:

-

Spotify Discover Weekly (it knows what songs you like and finds similar unlabeled music)

-

Gmail spam filter (you mark a few emails as spam; it learns patterns to catch the rest)

-

Medical diagnosis tools (doctors label a few images; AI extends that knowledge across millions)

-

ChatGPT, Claude, Gemini (all trained with self-supervised learning on massive unlabeled text)

When Semi-Supervised Learning Fails

Just like any machine learning technique, semi-supervised learning has limitations you need to watch for:

1. Garbage In, Garbage Out (Amplified)

If your small labeled dataset is biased or wrong, the AI will spread that bias across all the unlabeled data.

Example: If you label 10 photos of “doctors” and they’re all men, the AI might learn “doctor = male” and mislabel female doctors in the unlabeled set.

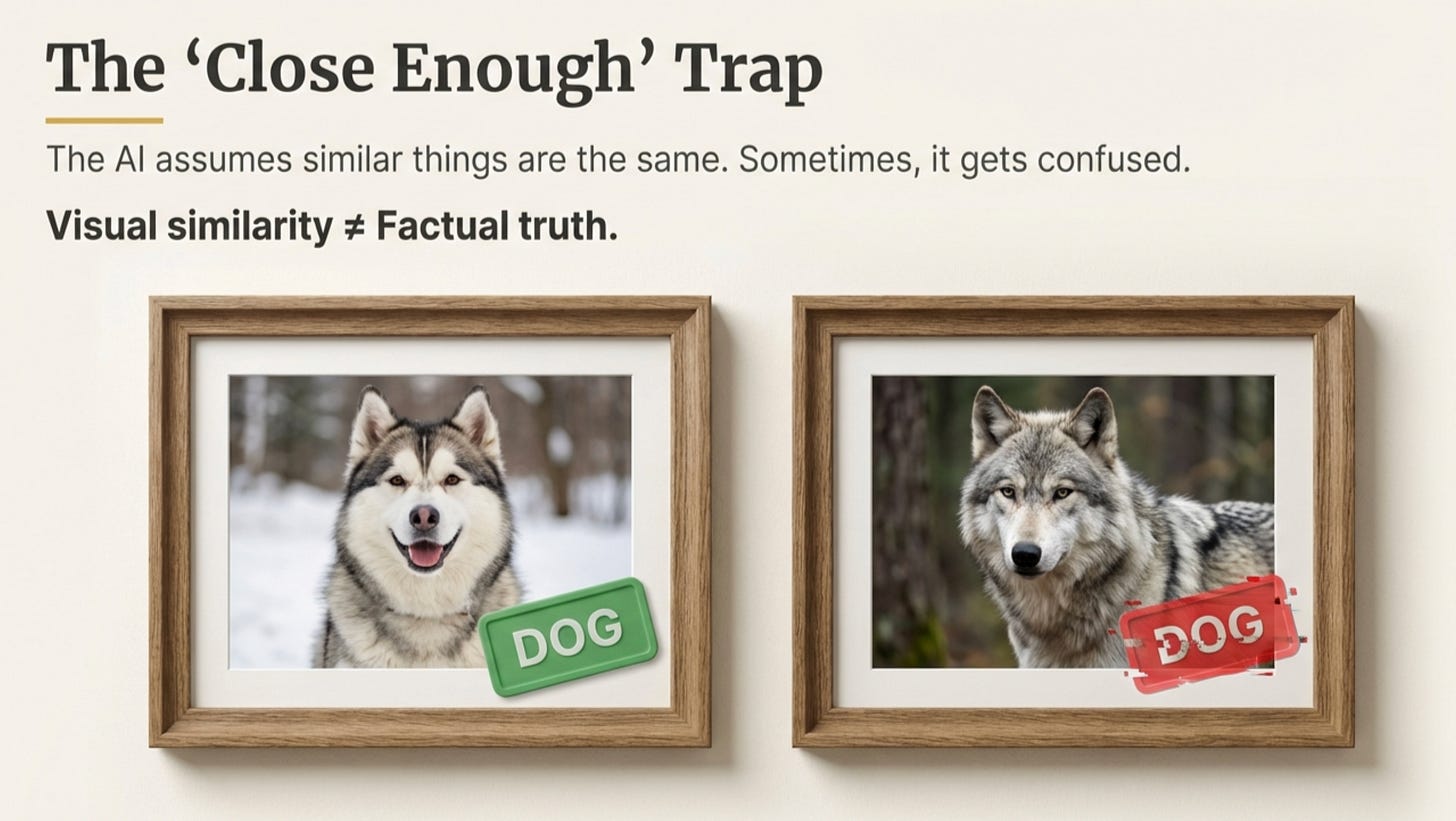

2. The “Close Together” Assumption Can Break

Semi-supervised learning assumes similar-looking things have the same label. But what if they don’t?

Example: Huskies and wolves look similar, but they’re not the same. If your labeled data only has huskies, the AI might confidently—and wrongly—label wolves as “husky.”

3. Domain Shift

If your unlabeled data comes from a different source than your labeled data, the AI can get confused.

Example: You label 100 professional X-rays (high quality, well-lit). Then you feed the AI 10,000 unlabeled phone photos of X-rays (blurry, poor lighting). The patterns don’t transfer well.

The Fix: Always check your unlabeled data’s quality and diversity before letting the AI loose on it.

Why This Matters Now

We live in a world where data is cheap, but labels are expensive.

-

We have billions of tweets, but we don’t know the sentiment of all of them.

-

We have millions of hours of YouTube video, but we don’t have transcripts for all of them.

-

We have endless medical images, but we can’t afford experts to label every one.

Semi-Supervised Learning allows companies to unlock the value of massive, messy datasets without hiring thousands of humans to manually tag every single file.

And Self-Supervised Learning is why AI could suddenly read, write, code, and converse in 2023-2026 without needing labeled “correct answers” for every sentence on the internet.

Try It Yourself

Want to see semi-supervised learning in action? Here’s a simple experiment:

-

Open Google Photos (or Apple Photos)

-

Upload 50+ photos with at least 2-3 people appearing multiple times

-

Wait for the app to cluster faces

-

Label just one photo of each person

-

Watch the AI instantly label dozens more

That’s semi-supervised learning working for you—right on your phone.

The Takeaway

Semi-Supervised Learning is the “work smarter, not harder” approach to AI.

-

Supervised: Requires a teacher for every lesson.

-

Unsupervised: No teacher at all.

-

Semi-Supervised: The teacher gives a few examples, and the student figures out the rest by association.

-

Self-Supervised (2026 Bonus): The student creates their own practice tests from the material itself.

This is how your phone organizes your memories, how medical AI detects diseases without bankrupting the hospital, and how ChatGPT learned to write without anyone grading billions of essays.

AI for Common Folks — Making AI understandable, one concept at a time.

Learn More

Want to dive deeper into practical AI? Check out the free fast.ai course, which inspired several examples in this article and teaches you to build real AI applications from day one.

Previous articles in this series:

Leave a Reply