Good morning. Microsoft is threatening to sue OpenAI and Amazon over a $50 billion cloud deal, a mystery AI model has the developer community asking “Is that you, DeepSeek?”, and Alibaba just restructured its entire company around AI agents. Here’s what happened 👇

1. Microsoft Threatens Legal Action Over $50 Billion Amazon-OpenAI Cloud Deal

Microsoft is considering suing both its partner OpenAI and Amazon over a $50 billion deal that it believes violates its exclusive cloud agreement with the ChatGPT maker. Last month, Amazon and OpenAI signed an agreement making AWS the exclusive third-party cloud provider for Frontier, OpenAI’s upcoming enterprise platform for building AI agents. Microsoft’s deal with OpenAI requires all of OpenAI’s models to be accessed through Azure.

“We know our contract,” a person familiar with Microsoft’s position told the Financial Times. “We will sue them if they breach it. If Amazon and OpenAI want to take a bet on the creativity of their contractual lawyers, I would back us, not them.” The companies are reportedly in talks to resolve the dispute before Frontier launches.

Why it matters: This is the clearest sign yet that the partnership holding the AI industry together is fraying. Microsoft has invested roughly $13 billion in OpenAI and built its entire AI strategy around exclusive access. If OpenAI can route around that deal through Amazon, it changes the power dynamics of the entire cloud AI market. For everyday users, this battle will determine which platforms get the best AI tools first.

Sources: Reuters

2. A Mystery AI Model Has Developers Buzzing: Is This DeepSeek’s Next Blockbuster?

A powerful AI model called “Hunter Alpha” appeared anonymously on the developer platform OpenRouter last week, and nobody knows who made it. During tests, it described itself as “a Chinese AI model primarily trained in Chinese” with a training cutoff of May 2025, the same as DeepSeek’s chatbot. The model boasts 1 trillion parameters and a context window of up to 1 million tokens, and it’s available for free.

Those specs match expectations for DeepSeek’s upcoming V4 model, which Chinese media has reported could launch as early as April. “The chain-of-thought pattern is probably the strongest signal,” said one AI engineer who analyzed the model. “Reasoning style is hard to disguise and tends to reflect how a model was trained.” The model has already processed over 160 billion tokens since its March 11 launch.

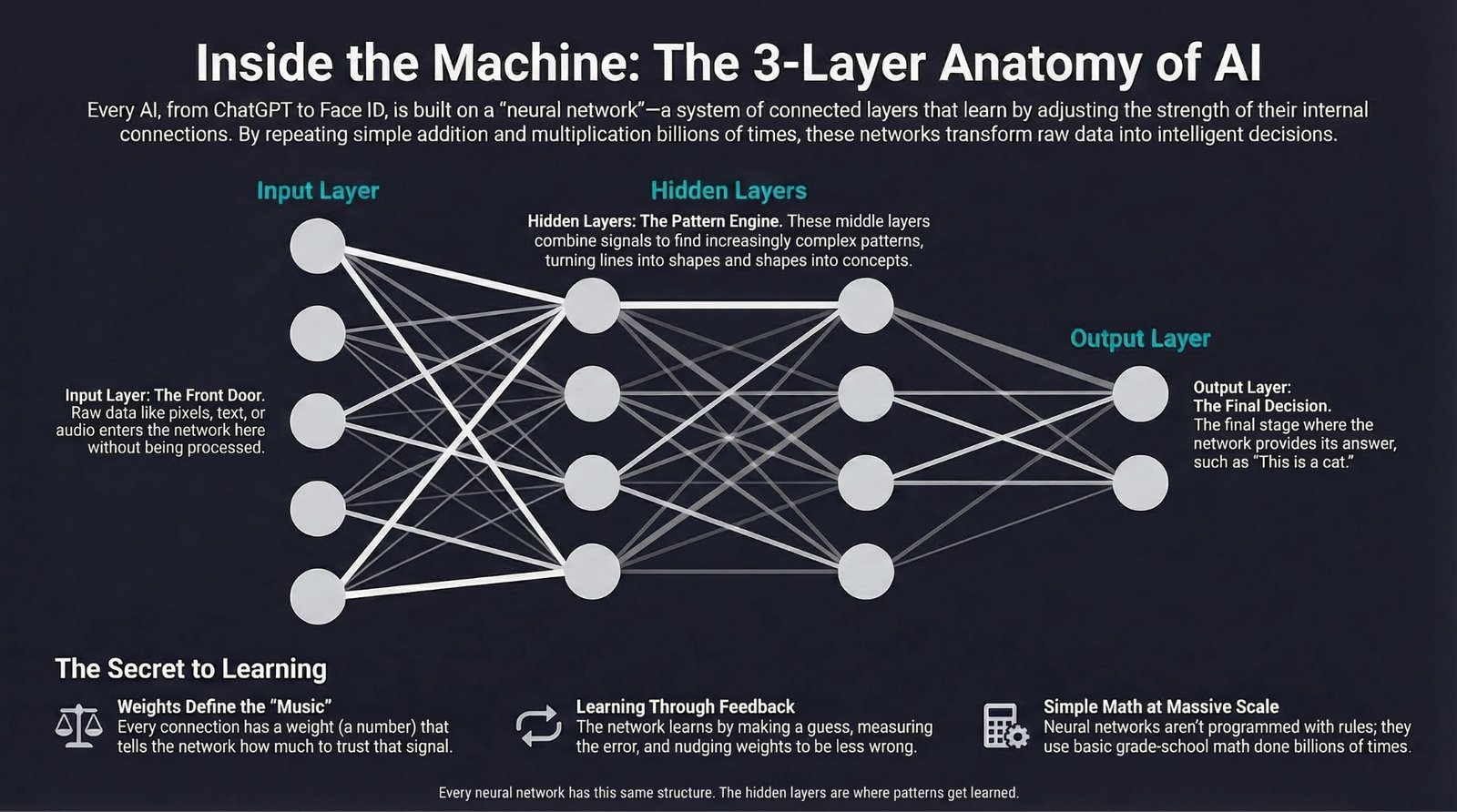

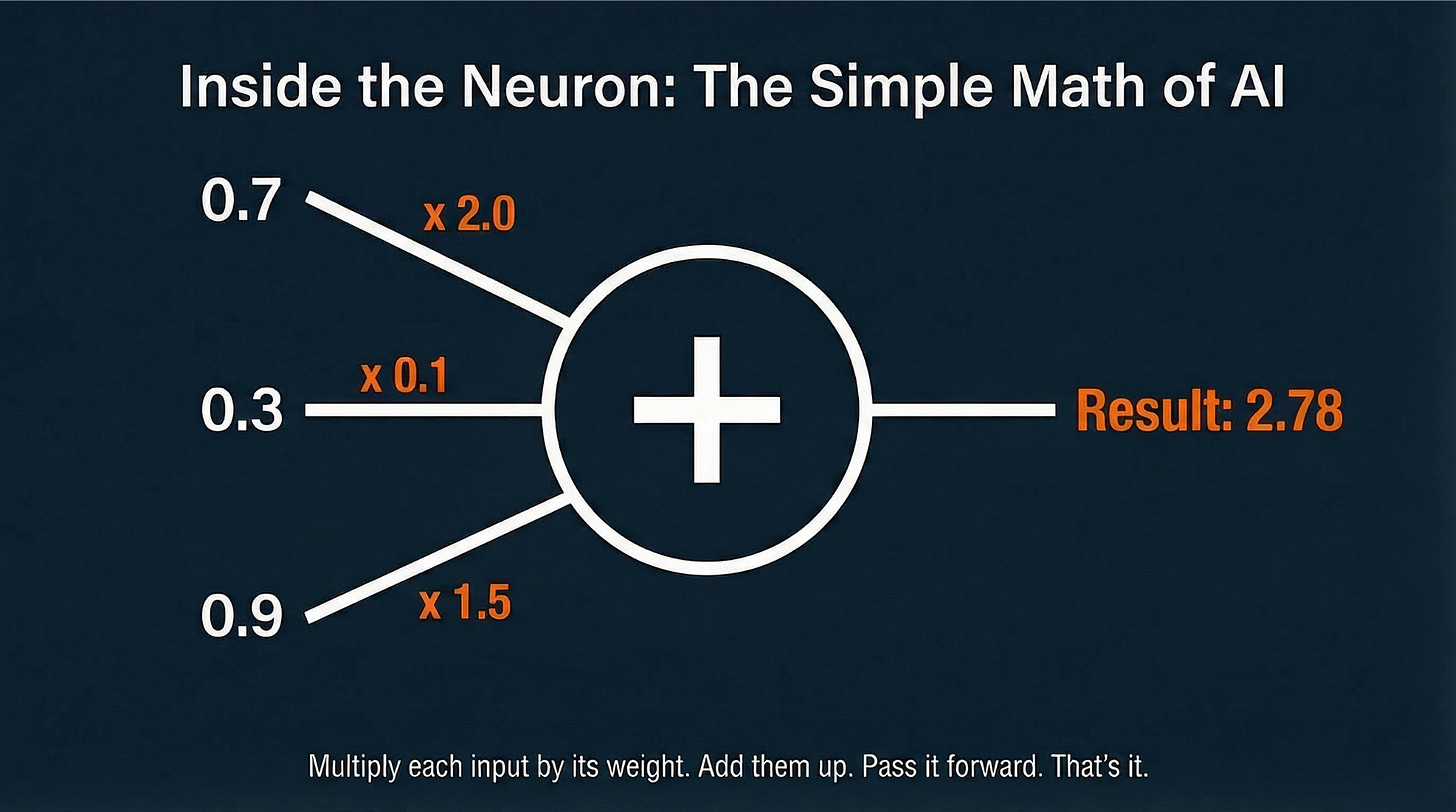

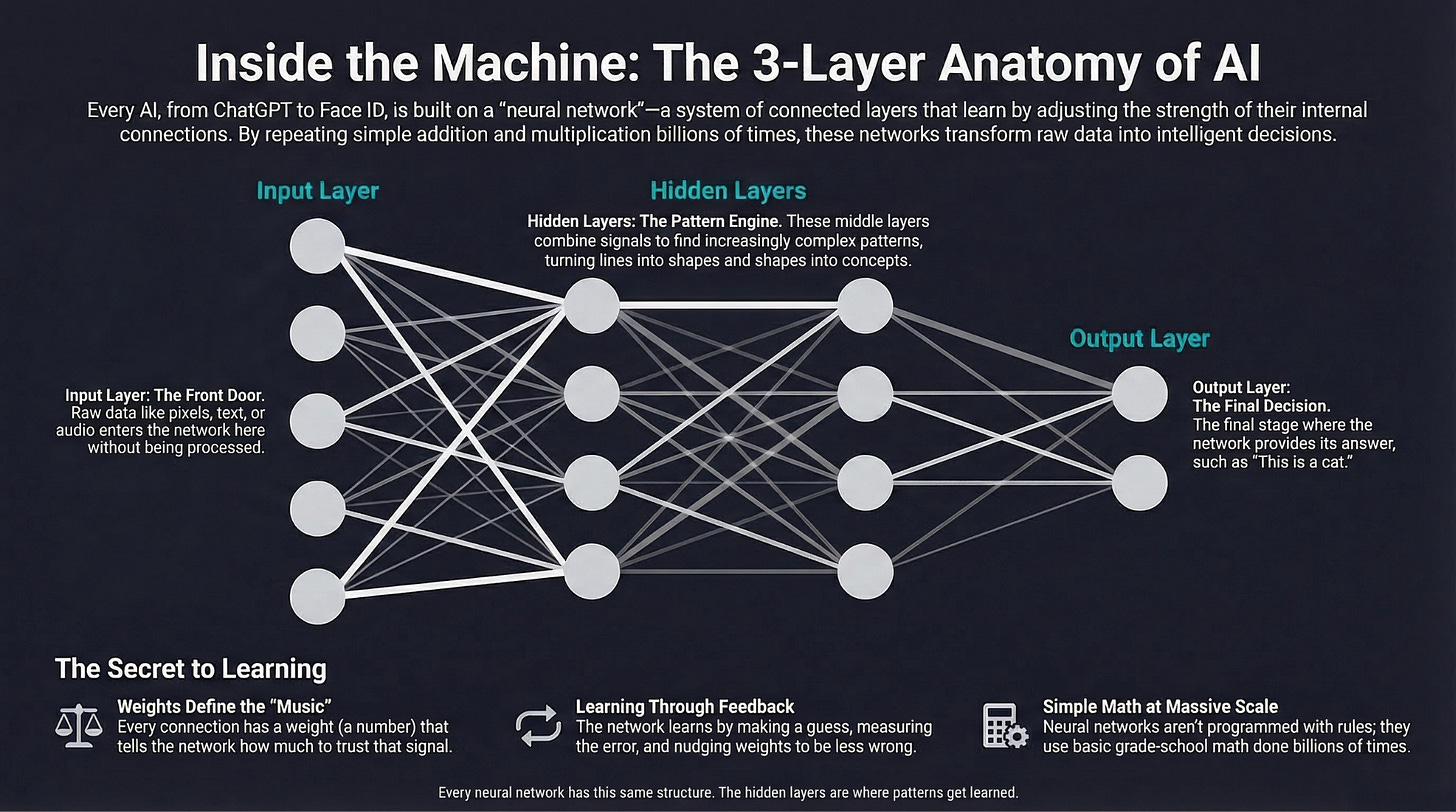

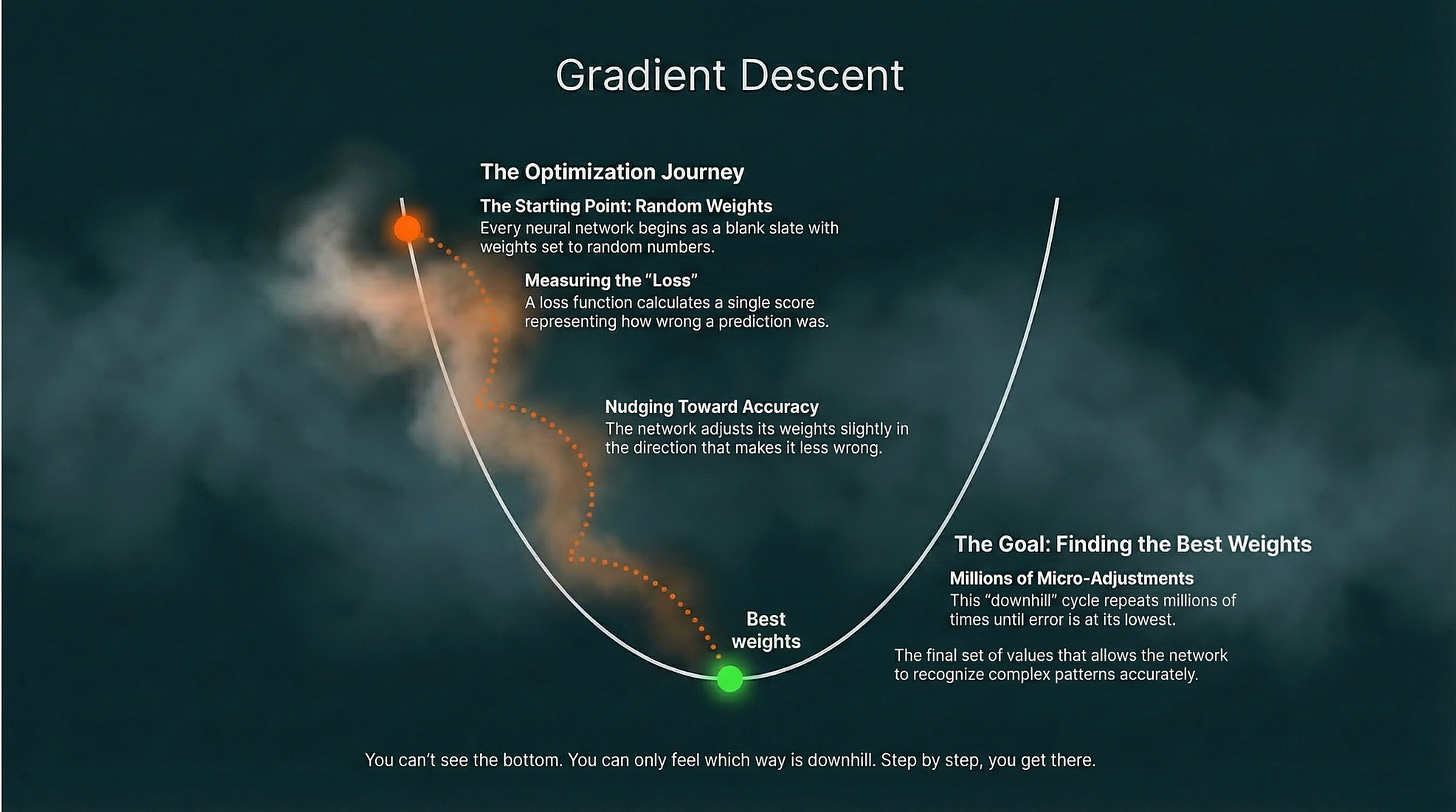

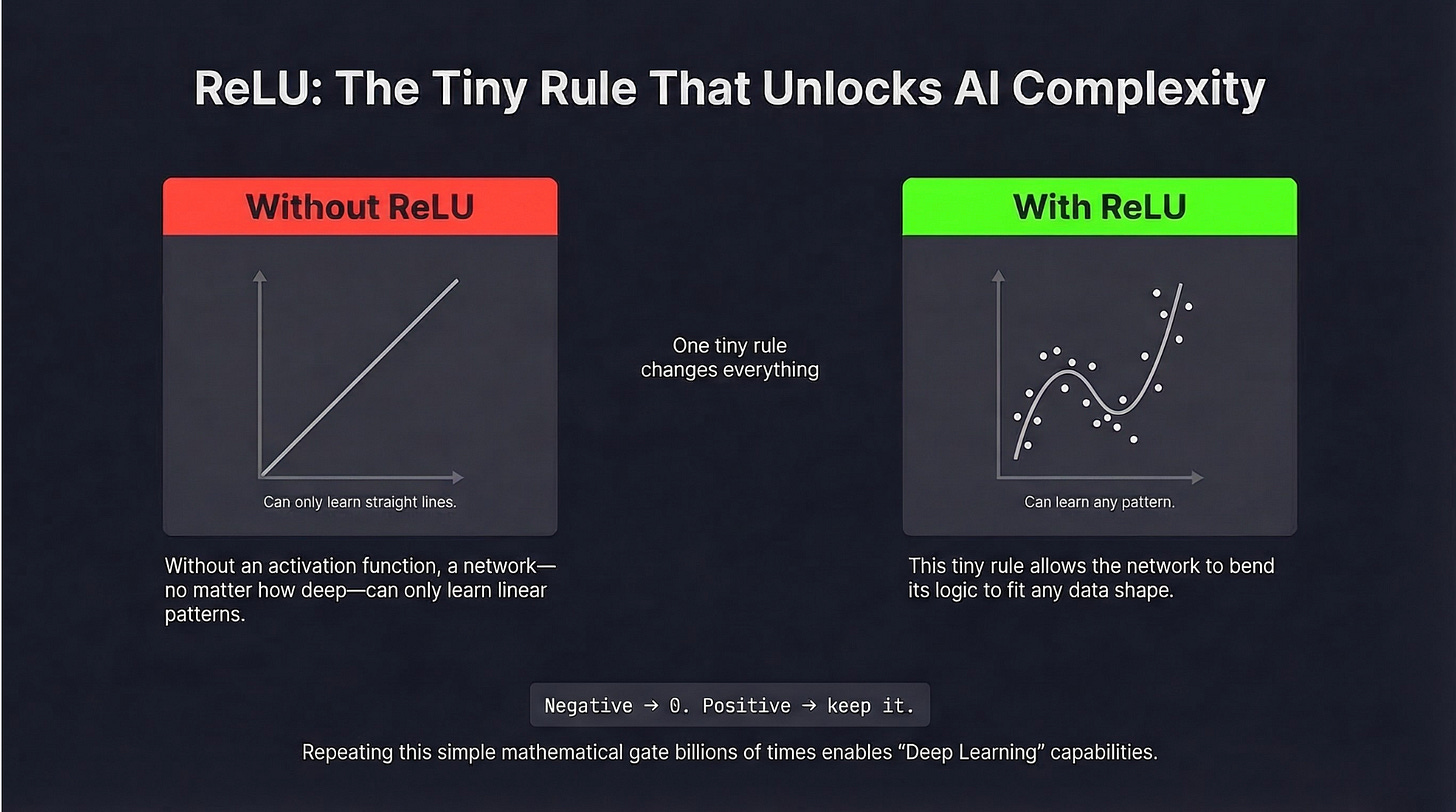

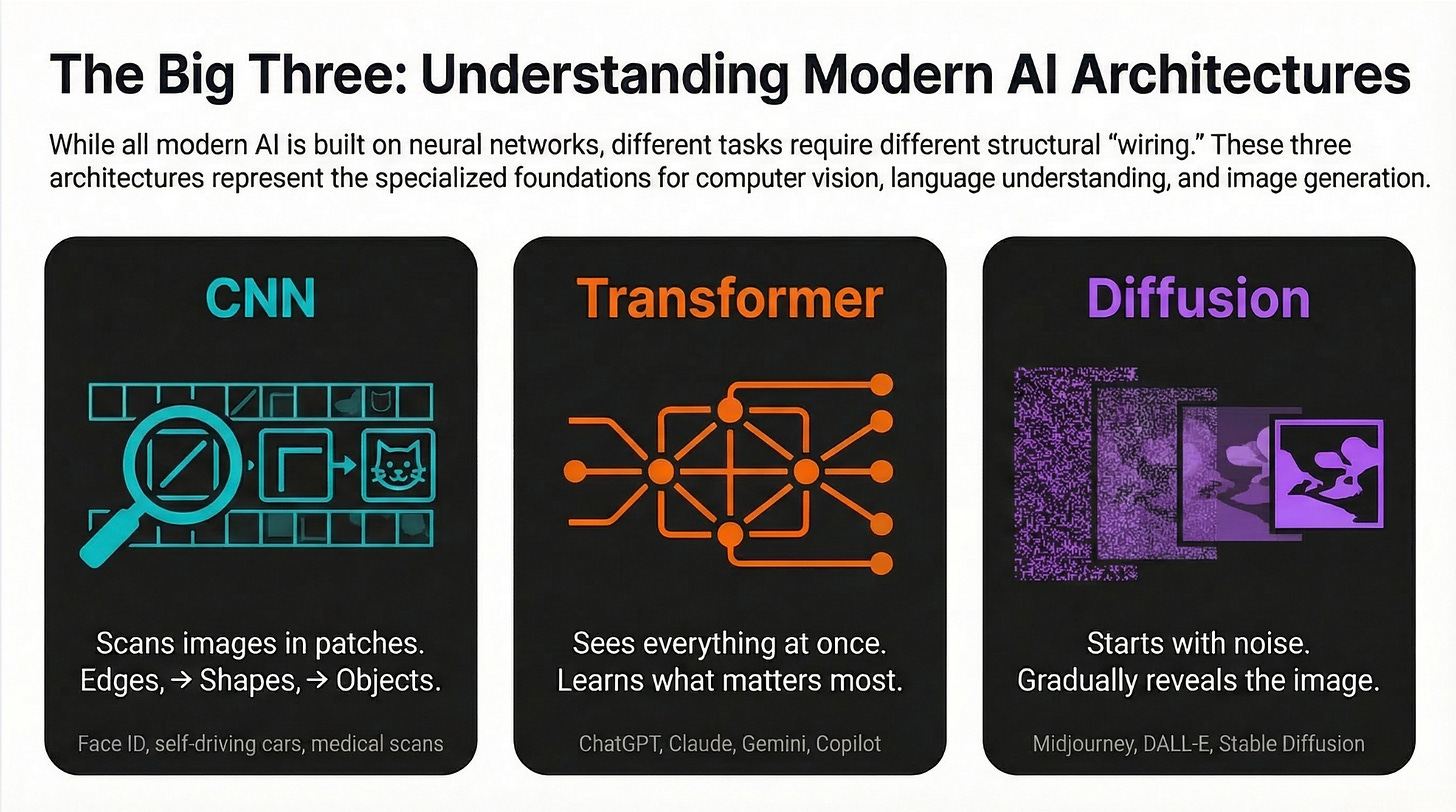

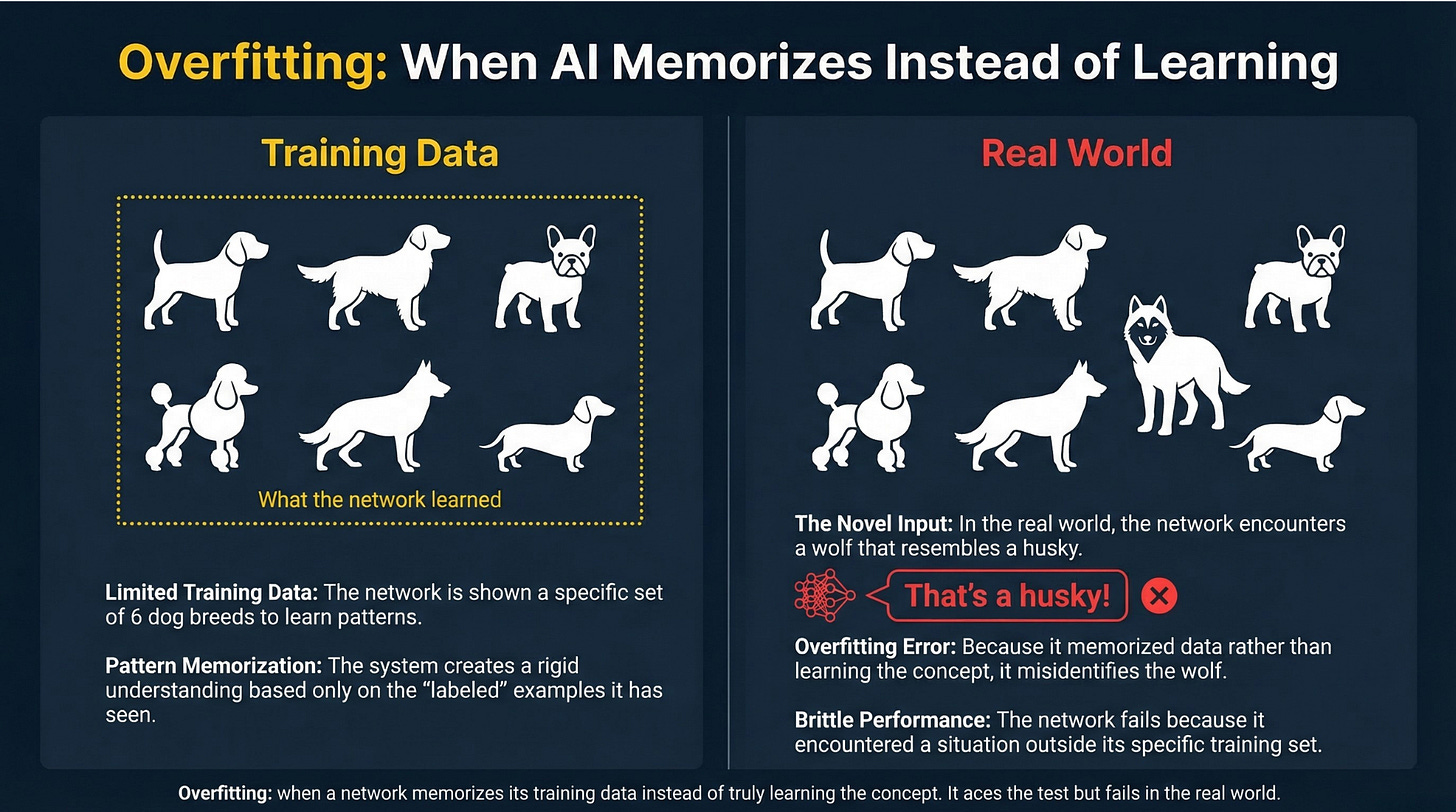

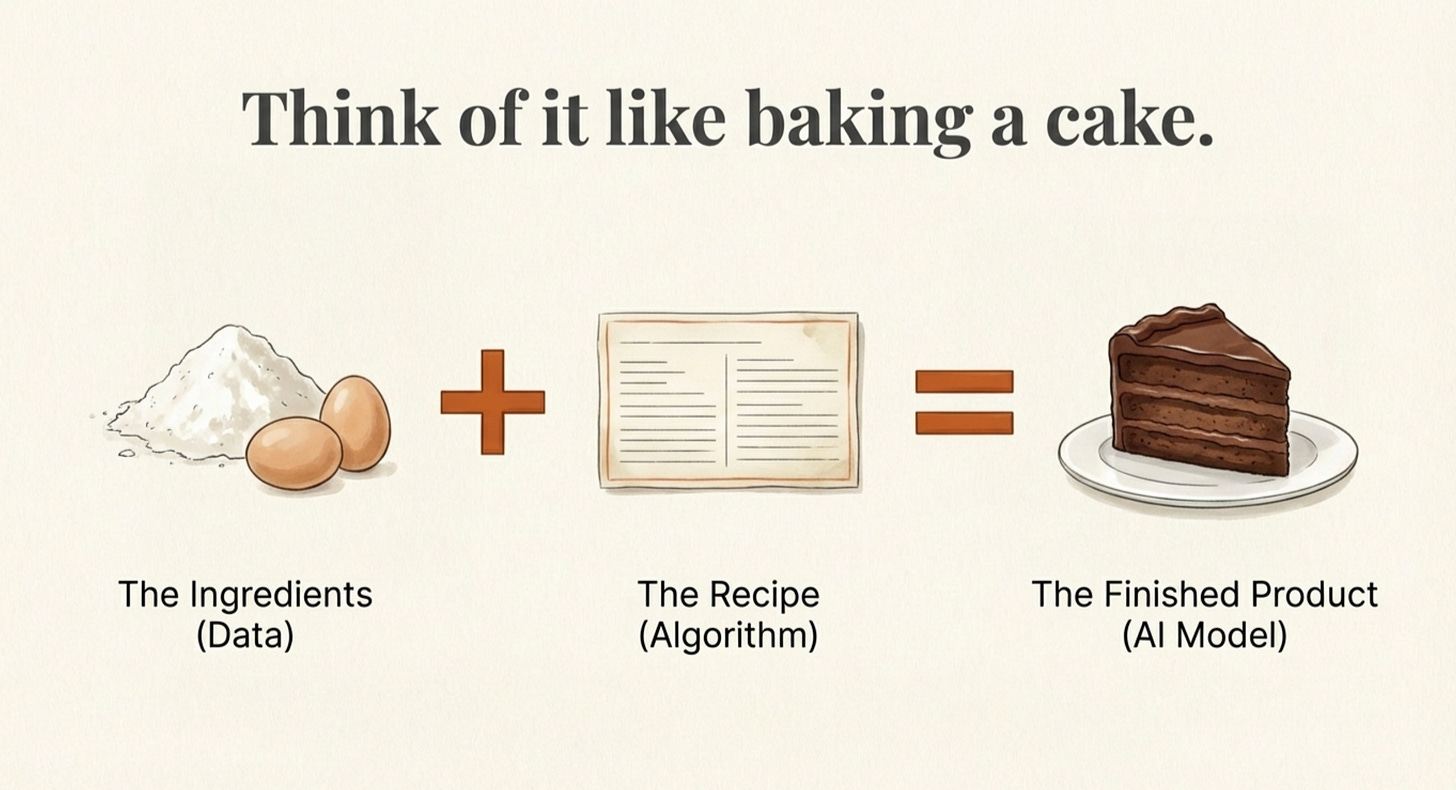

Why it matters: If this is DeepSeek V4, it would be another jaw-dropping move from the Chinese startup that shocked the industry early last year with models that rival those from American labs at a fraction of the cost. A 1-trillion-parameter model with free access and a million-token context window would put serious pressure on every paid AI service. We explained what AI models actually are in our AI Explained series if you want to understand what these numbers mean.

Sources: Reuters

3. Alibaba Restructures Around AI Agents, Launches Enterprise Platform “Wukong”

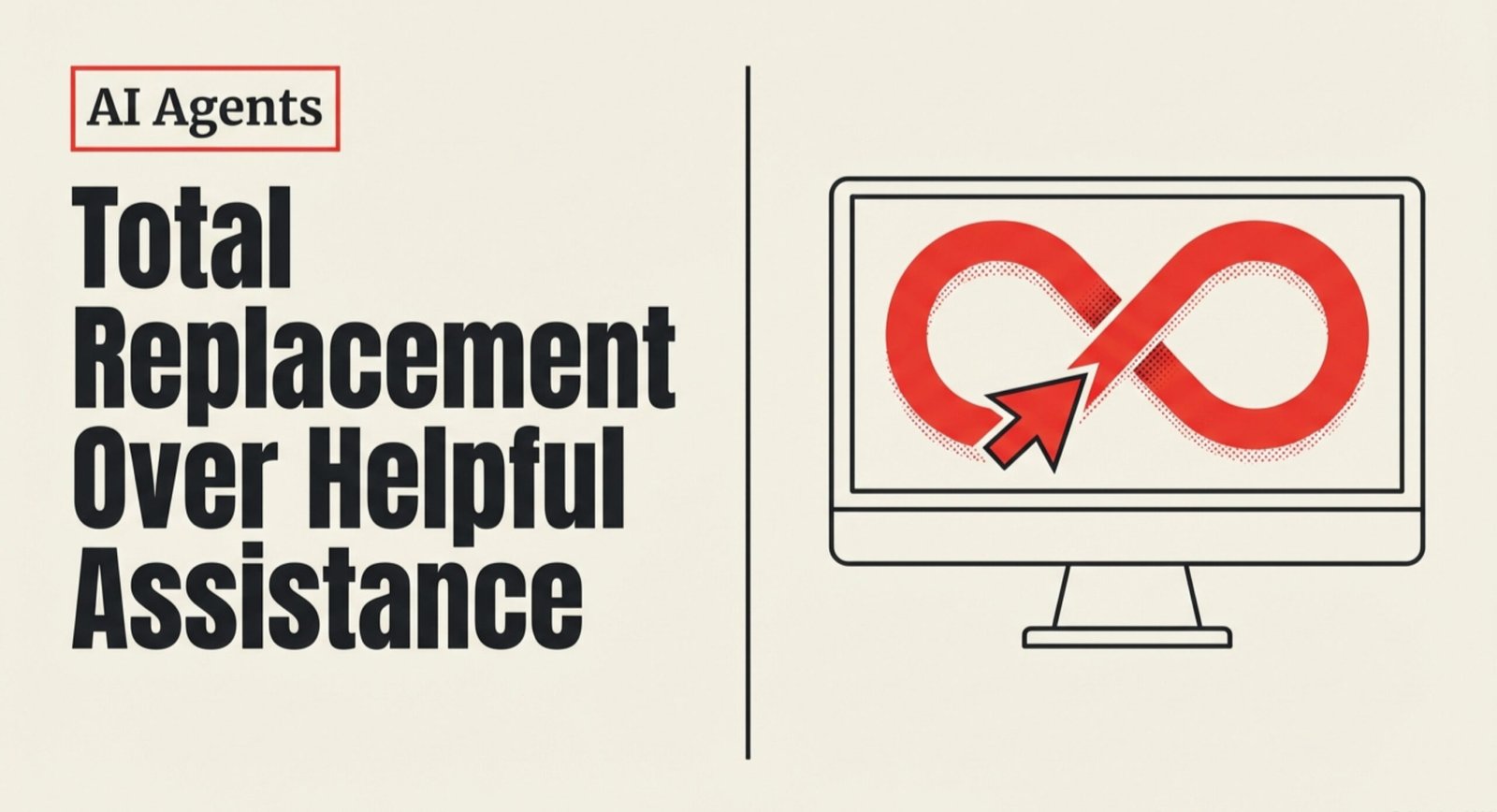

Alibaba is making its biggest bet yet on AI agents. The $325 billion company separated its AI businesses from its cloud arm and formed a new “Token Hub” business group led by CEO Eddie Wu. The move signals a shift from simple chatbots to AI agents that can actually do things across Alibaba’s massive ecosystem of e-commerce, food delivery, travel, and movie ticketing.

On Tuesday, Alibaba also launched Wukong, an enterprise platform where multiple AI agents can coordinate to handle tasks like document editing, meeting transcription, and research. “Think of it like having OpenAI, Amazon, Stripe, Uber, DoorDash, Ticketmaster, Expedia, Netflix and Charles Schwab all integrated into one text box,” said one former Alibaba executive.

Why it matters: While American companies are still arguing over cloud contracts, Chinese tech giants are racing to build AI agents that handle your entire daily life through a single chat interface. Alibaba’s ecosystem advantage is real: no other company owns the chatbot, the shopping platform, the delivery fleet, and the cloud infrastructure all at once. This is what an AI-native company looks like when the pieces are already in place.

Sources: Reuters

4. Google Opens Personalized Gemini AI to All US Users for Free

Google announced that all US users can now access its “Personal Intelligence” feature, which was previously limited to paid subscribers. The feature connects your Google apps, including Gmail, YouTube, Google Photos, and Search, to Gemini so it can personalize its responses without you having to explain your context in every prompt. Gemini might offer shopping recommendations based on your purchase history or troubleshoot your devices based on info it already has.

The feature is opt-in only and users can disconnect apps at any time.

Why it matters: Google just made its most powerful AI feature free for everyone in the US. The trade-off is clear: give Google even more access to your data, and it gives you an AI that actually knows you. This is the kind of move that could pull users away from ChatGPT and Claude, which don’t have the same built-in access to your email, photos, and search history. Whether that trade-off is worth it depends entirely on how you feel about Google knowing everything about you.

Sources: The Verge, TechCrunch

Quick Hits

- Samsung and AMD signed a partnership on AI memory chips and are exploring a foundry deal, continuing the wave of GTC-week chip alliances. (Reuters)
- Nvidia got Beijing’s approval to sell H200 chips in China and is adapting its Groq-licensed chips for the Chinese market, navigating the tightrope between U.S. export controls and its biggest international customer. (Reuters)
- Mistral launched “Forge,” a platform letting enterprises train custom AI models from scratch on their own data, positioning the French startup as the anti-OpenAI for companies that want to own their AI. The company is on track to hit $1 billion in annual recurring revenue this year. (TechCrunch)
- The Pentagon is developing alternatives to Anthropic for military AI applications, signaling the defense establishment wants multiple AI suppliers rather than depending on any single company. (TechCrunch)
- World launched a tool to verify that humans are behind AI shopping agents, using iris-scan backed tokens to stop agent swarms from overwhelming online systems. (Ars Technica)

That’s it for today. The AI industry is splitting into two parallel races: in the U.S., the biggest companies are lawyering up over who controls the cloud infrastructure, while in China, they’re skipping the legal battles and building the AI-powered everything apps that might define how people actually use this technology.

Forward this to someone who needs to stay in the loop.